I Built an AI App in 4 Months, Not 4 Hours. Here's What the "Vibe Coders" Won't Tell You.

What 20 years of software development and a decade of MarTech consulting taught me about using Claude Code, and why the "build an app in an afternoon" crowd is selling you a fantasy.

You've seen the tweets. "I built a $10K MRR app in a weekend with zero coding experience." Screenshots of ChatGPT conversations and working prototypes. LinkedIn posts about how AI has democratized software development and anyone can ship a product now.

I believed it too. Sort of.

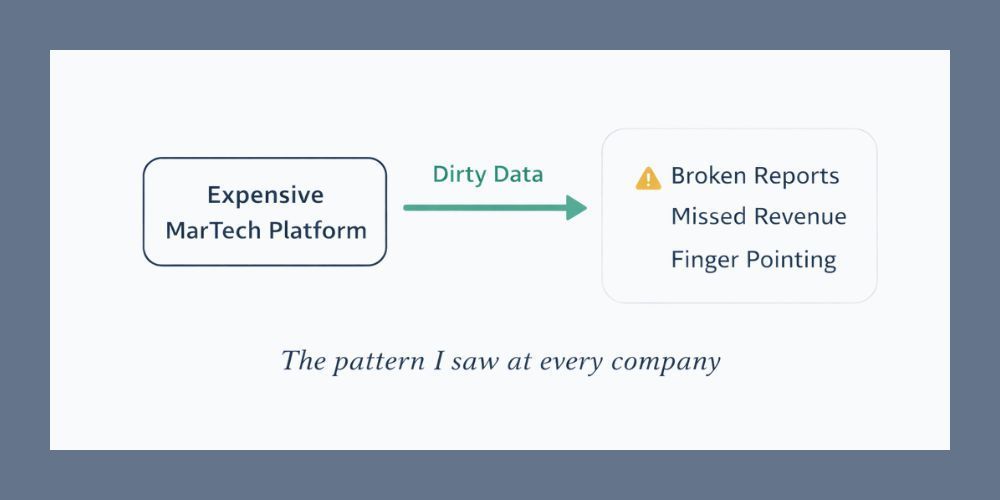

In mid-August, I started building CleanSmart, an AI-powered data cleaning platform that handles semantic duplicate detection, multi-source merging, and confidence-based automation. By mid-December, I had a working beta.

Four months. Not four hours.

And here's the part the "build an app in an afternoon" crowd won't tell you: my information systems degree and 20+ years of software and website development experience weren't optional. They were the reason I finished at all.

But that's only half the story. The other half is why I built it in the first place.

A Decade of Watching Good Systems Fail

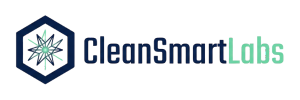

Before I wrote a single line of CleanSmart code, I spent over ten years as a MarTech consultant helping companies implement CRMs, marketing automation platforms, and customer data platforms. Salesforce. HubSpot. Marketo. Segment. I've configured them all.

Here's what I learned: these systems are only as good as the data you feed them.

I'd sit in kickoff meetings with marketing and sales teams, excited about their new platform. Six figures spent on licensing. Months of implementation ahead. And then we'd pull their customer data and find the same problems every single time.

Duplicates everywhere. "John Smith" and "Jon Smith" and "J. Smith" all living as separate records. Phone numbers in twelve different formats. Email addresses with typos that would never get caught. Company names spelled three different ways across three different systems.

The CRM wasn't broken. The marketing automation wasn't broken. The data was broken.

I'd watch companies spend $200K on a Salesforce implementation, then wonder why their sales team still couldn't trust the pipeline numbers. The answer was always the same: garbage in, garbage out. No amount of automation fixes dirty data. It just automates the mess faster.

After years of telling clients "you need to clean your data first," I got tired of not having a good answer for how. The tools that existed were either too technical for marketing ops teams to use, too expensive for growing businesses to afford, or too basic to catch the duplicates that actually mattered.

So I built the tool I wished I could have handed to every client I ever worked with.

The Product That Can't Be Built in a Weekend

Let me tell you what CleanSmart actually does, because this matters.

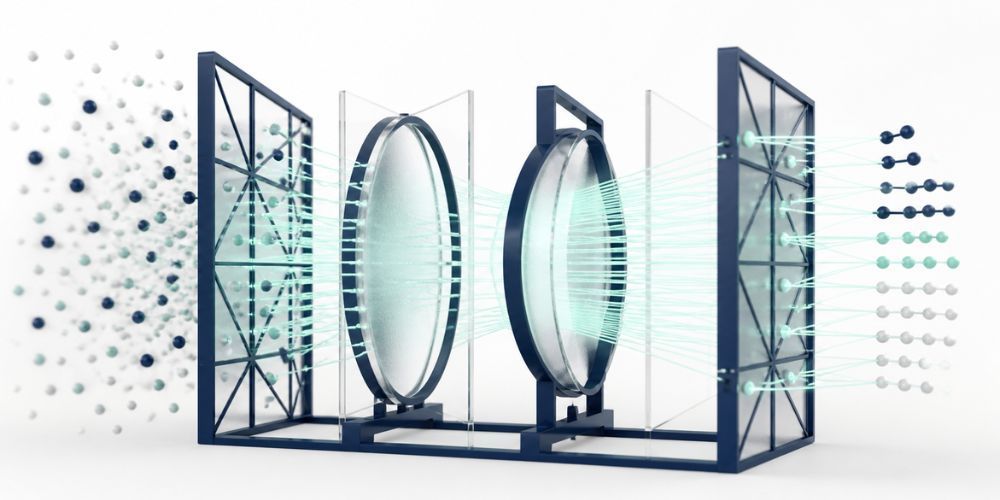

When businesses try to merge customer data from Salesforce and HubSpot, they hit a wall. "John Smith" in one system and "Jon Smith" in the other. Same person, but traditional string matching treats them as two different records. Your sales team chases the same lead twice. Your marketing campaigns blast duplicates. Your analytics lie to you.

I've seen this exact scenario at dozens of companies. The sales VP pulls a pipeline report and the numbers don't match what marketing sees. Finance can't reconcile customer counts across systems. Everyone points fingers. And the root cause is always the same: the data was never unified properly in the first place.

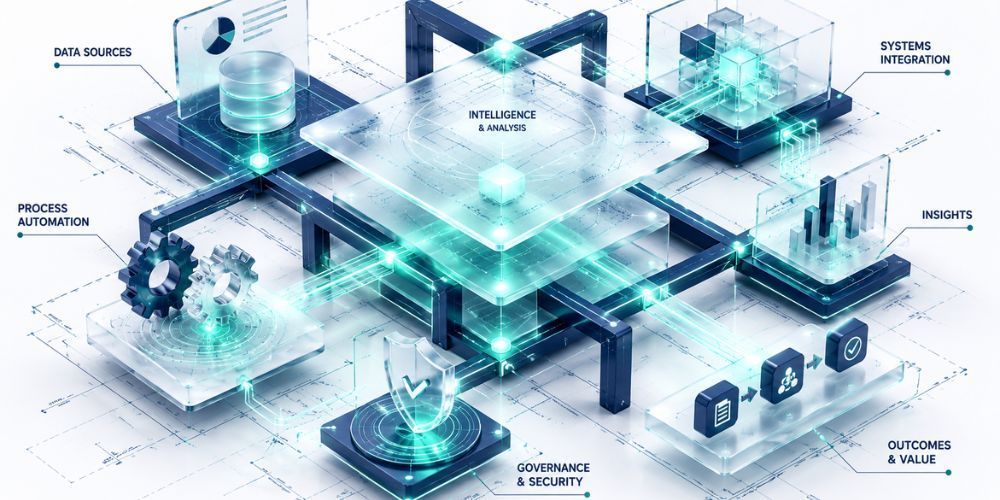

CleanSmart uses sentence transformers for semantic similarity matching. It applies the Isolation Forest algorithm for anomaly detection. It calculates confidence scores for every automated change and routes low-confidence decisions to humans for review. The architecture includes a hub-and-spoke system for multi-source merging with customizable conflict resolution strategies.
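A hub-and-spoke merge with customizable conflict resolution is easier to picture with a small example. The sketch below illustrates the idea under stated assumptions and is not CleanSmart's implementation:

- Each spoke (source system) supplies a record with a last-updated timestamp.
- The hub is the merged record.
- Each field is resolved by a pluggable strategy.

The two strategies shown (source priority and most-recent-wins), all names (`SourceRecord`, `source_priority`, `most_recent`, `merge`), and the sample records are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Callable, Optional

@dataclass
class SourceRecord:
    source: str           # spoke name, e.g. "salesforce" (illustrative)
    updated_at: datetime  # when that system last touched the record
    fields: dict          # field name -> value

# A strategy gets a field name plus every spoke's record and returns the winning value.
Strategy = Callable[[str, list], Optional[str]]

def _with_value(field_name: str, records: list) -> list:
    """Spoke records that hold a non-empty value for this field."""
    return [r for r in records if r.fields.get(field_name)]

def source_priority(order: list) -> Strategy:
    """Prefer the value from the most trusted source, in the given order."""
    rank = {name: i for i, name in enumerate(order)}
    def pick(field_name: str, records: list) -> Optional[str]:
        candidates = _with_value(field_name, records)
        if not candidates:
            return None
        best = min(candidates, key=lambda r: rank.get(r.source, len(rank)))
        return best.fields[field_name]
    return pick

def most_recent(field_name: str, records: list) -> Optional[str]:
    """Prefer the value from whichever source updated the record last."""
    candidates = _with_value(field_name, records)
    if not candidates:
        return None
    return max(candidates, key=lambda r: r.updated_at).fields[field_name]

def merge(records: list, strategies: dict, default: Strategy) -> dict:
    """Build the hub record, resolving each field with its configured strategy."""
    all_fields = sorted({f for r in records for f in r.fields})
    return {f: strategies.get(f, default)(f, records) for f in all_fields}

sf = SourceRecord("salesforce", datetime(2025, 3, 1),
                  {"name": "John Smith", "email": "john@acme.com"})
hs = SourceRecord("hubspot", datetime(2025, 5, 1),
                  {"name": "Jon Smith", "email": "john.smith@acme.com", "phone": "+12125550100"})

golden = merge([sf, hs], {"name": source_priority(["salesforce", "hubspot"])}, default=most_recent)
print(golden)
# {'email': 'john.smith@acme.com', 'name': 'John Smith', 'phone': '+12125550100'}
```

Strategies are plain functions, so a per-field policy becomes configuration rather than code. For example, a team could trust the CRM for names and trust whichever system changed last for contact details.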

None of this is "tell Claude Code what you want and watch it build." This is systems architecture. Data flow design. User experience decisions that require understanding how actual humans interact with software.

The semantic matching alone required me to know that sentence transformers exist, that all-MiniLM-L6-v2 is the right model for this use case, and that simple Levenshtein distance would miss the duplicates that matter most. Someone doing "vibe coding" on a weekend wouldn't know to ask for any of that. They'd end up with an app that confidently calls Robert and Bob two different customers.
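The Robert/Bob problem is easy to reproduce. Below is a minimal sketch of edit-distance matching in plain Python. It is not CleanSmart's code, and the 0.8 cutoff is a hypothetical threshold.

- A one-letter typo scores well above the cutoff.
- The nickname pair falls below it, because "Robert" and "Bob" are four character edits apart even though they usually name the same person.

```python
def levenshtein(a: str, b: str) -> int:
    """Classic dynamic-programming edit distance (insert, delete, substitute each cost 1)."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # delete ca
                curr[j - 1] + 1,           # insert cb
                prev[j - 1] + (ca != cb),  # substitute (free when characters match)
            ))
        prev = curr
    return prev[-1]

def similarity(a: str, b: str) -> float:
    """Normalize distance to 0..1 so one threshold works across name lengths."""
    a, b = a.lower(), b.lower()
    longest = max(len(a), len(b)) or 1
    return 1 - levenshtein(a, b) / longest

THRESHOLD = 0.8  # hypothetical cutoff for "probably the same person"

for left, right in [("John Smith", "Jon Smith"), ("Robert Smith", "Bob Smith")]:
    score = similarity(left, right)
    verdict = "match" if score >= THRESHOLD else "no match"
    print(f"{left!r} vs {right!r}: {score:.2f} -> {verdict}")
# 'John Smith' vs 'Jon Smith': 0.90 -> match
# 'Robert Smith' vs 'Bob Smith': 0.67 -> no match
```

A semantic matcher compares embedding vectors instead of characters. With the `sentence-transformers` library, that means encoding each name with a model such as all-MiniLM-L6-v2 and comparing the vectors by cosine similarity. That step needs downloaded model weights, so it is left out of this self-contained sketch.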

I knew to ask for it because I'd spent years watching exactly that problem destroy the ROI of million-dollar MarTech investments.

The Timeline Nobody Wants to Hear

From mid-August through the end of October, I worked on CleanSmart about eight hours a day. Full-time, focused development.

Then November hit. I picked up a contract job and could only dedicate 10-12 hours a week to CleanSmart. The beta was ready by mid-December.

That's roughly 2.5 months of intensive work plus 6-7 weeks of part-time effort. And within that timeline, I burned 2-3 full weeks on rework because I skipped steps I knew better than to skip. Somewhere between a fifth and a quarter of my intensive development phase, gone because I let the speed of the tool convince me I could shortcut the fundamentals.

The hype makes you feel like careful planning is optional when Claude can "just build it." That's the trap.

The Prototype That Missed the Point

Here's where it got expensive.

I started with a quick prototype in Bolt. It looked great. Had the basic structure. I thought I had everything I needed to start the real development.

I was wrong.

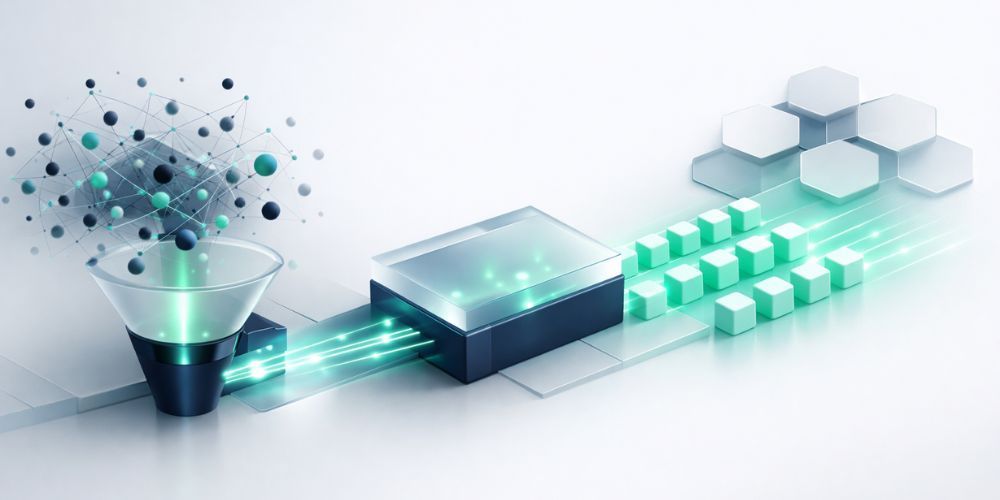

The prototype completely missed the user review step for AI-generated changes. CleanSmart's entire value proposition hinges on a confidence scoring system: high-confidence changes happen automatically, and low-confidence changes require human approval. That human-in-the-loop workflow? Nonexistent in my prototype.
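The routing rule itself is simple. The hard part is designing the review step around it.

Here is a minimal sketch of the split. It assumes each proposed change already carries a 0-1 confidence score from whatever model produced it. The 0.95 threshold, the class names, and the sample changes are hypothetical, not CleanSmart's values.

```python
from dataclasses import dataclass, field

@dataclass
class ProposedChange:
    record_id: str
    field_name: str
    old_value: str
    new_value: str
    confidence: float  # 0.0-1.0, supplied by the matcher/model that proposed the change

@dataclass
class RoutingResult:
    auto_applied: list[ProposedChange] = field(default_factory=list)
    needs_review: list[ProposedChange] = field(default_factory=list)

def route(changes: list[ProposedChange], auto_threshold: float = 0.95) -> RoutingResult:
    """Apply high-confidence changes automatically; queue everything else for a human."""
    result = RoutingResult()
    for change in changes:
        if change.confidence >= auto_threshold:
            result.auto_applied.append(change)
        else:
            result.needs_review.append(change)
    return result

changes = [
    ProposedChange("c-1", "email", "jon@acme.con", "jon@acme.com", 0.98),
    ProposedChange("c-2", "name", "Bob Smith", "Robert Smith", 0.72),
]
result = route(changes)
print([c.record_id for c in result.auto_applied])  # ['c-1']
print([c.record_id for c in result.needs_review])  # ['c-2']
```

The threshold is a parameter. A cautious team could start with nearly everything in review and lower the threshold as trust builds.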

Someone without years of building software might have shipped the "automate everything" version and wondered why users didn't trust it. I caught the gap because I've been through enough user testing to know that people need control over AI decisions that affect their data.

But I also caught it because of something I learned in consulting: ops teams don't trust black boxes. Every marketing ops manager I ever worked with wanted to see exactly what changed before it went live. They'd been burned too many times by automation that "fixed" things in ways that broke their campaigns. CleanSmart had to show its work, or nobody would use it.

The fix required rearchitecting significant portions of the application. Weeks of rework that proper upfront design would have prevented.

What the Prototype Got Right

The prototype wasn't a total loss, though. It was good enough to demo.

I recorded a video walkthrough of myself using the prototype and shared it with potential users. Not to sell them anything. To ask questions. What features matter most? What integrations do you need? How does this interface feel? What would you pay for something like this?

That feedback shaped everything that came next.

The people who responded became my informal advisory group. They helped me prioritize which features to build first, what the product roadmap should look like, and what pricing the market would actually bear. When the beta launched, they were the first to get access (free, as a thank you for helping me build something people actually wanted).

This is the part of product development that "build an app in an afternoon" skips entirely. Claude Code can generate features fast. It can't tell you which features matter to customers, what they'll pay, or whether your interface makes sense to anyone besides you. That requires talking to humans before you write production code.

Years of consulting taught me that the features you think matter and the features customers actually use are rarely the same. I'd watched too many products fail because the builders never asked.

The prototype was too flawed to ship. But it was perfect for learning.

What Claude Code Actually Requires

The people selling "no code experience necessary" are either lying or building toys.

Claude Code requires you to treat it like a developer on your team. A skilled one, sure. But a developer who needs clear requirements, defined user flows, and explicit expected outcomes. When I approached Claude like a magic wand that could interpret vague intentions, things broke.

The biggest mistakes happened when I assumed Claude understood user interactions as well as I did. Twenty years of watching people interact with software, sitting through usability tests, seeing where designs fall apart in the real world. Claude doesn't carry any of that. I needed to be explicit about how users would interact with each feature, or we'd design too narrowly or too broadly.

I also needed to translate a decade of domain knowledge into prompts. What does a RevOps manager actually need to see when reviewing duplicate matches? What fields matter most when merging customer records? How do you handle the edge case where two records have conflicting email addresses but the same phone number? Claude didn't know any of this. I did, because I'd lived it with clients for years.

When I didn't provide that context, we'd go down the wrong path. Sometimes for days.
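The conflicting-email, same-phone case is a good example of a rule that has to be spelled out explicitly. Here is one possible policy as a minimal sketch, not CleanSmart's actual logic:

- Normalize phone numbers (assuming North American numbers only) and email addresses.
- Merge when both agree.
- Send same-phone/different-email pairs to a human.

The function names and the outcome labels (`merge`, `review`, `no_signal`) are hypothetical.

```python
import re
from typing import Optional

def normalize_phone(raw: Optional[str]) -> Optional[str]:
    """Reduce a North American number to +1XXXXXXXXXX, or None if it doesn't fit.

    Assumption: US/Canada numbers only. International data would need a real
    parser such as the `phonenumbers` package.
    """
    if not raw:
        return None
    digits = re.sub(r"\D", "", raw)  # drop spaces, dots, dashes, parentheses, "+"
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]          # strip a leading country code
    return "+1" + digits if len(digits) == 10 else None

def normalize_email(raw: Optional[str]) -> Optional[str]:
    return raw.strip().lower() if raw else None

def classify_pair(a: dict, b: dict) -> str:
    """Hypothetical policy for two records that may describe the same person."""
    phone_a, phone_b = normalize_phone(a.get("phone")), normalize_phone(b.get("phone"))
    if phone_a is None or phone_a != phone_b:
        return "no_signal"  # phone tells us nothing; leave it to other matchers
    email_a, email_b = normalize_email(a.get("email")), normalize_email(b.get("email"))
    if email_a and email_b and email_a != email_b:
        return "review"     # shared phone, different emails: shared line? job change?
    return "merge"

a = {"email": "jon@acme.com", "phone": "(212) 555-0100"}
b = {"email": "john.smith@gmail.com", "phone": "+1 212.555.0100"}
c = {"email": " JON@acme.com", "phone": "212-555-0100"}

print(classify_pair(a, b))  # review
print(classify_pair(a, c))  # merge
```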

Debugging alone could take forever in the early months. Something would break, Claude would fix it, and that fix would break something else. Days of iteration to solve problems that felt like they should take minutes. The tool improved dramatically from August to December (or I got better at prompting, or both), but those early weeks were a slog, not a sprint.

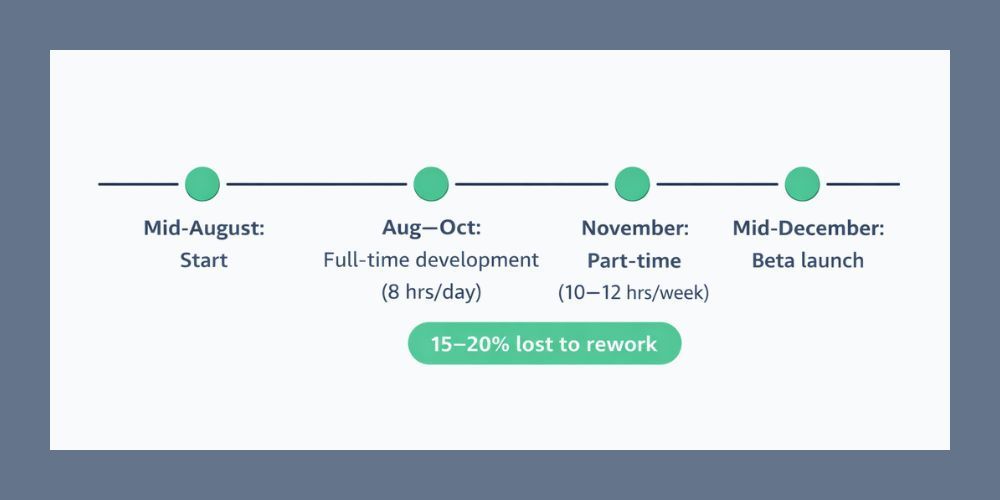

The Architecture Directive I Wish I'd Created Sooner

Four weeks into the project, I started using OpenAI's Codex to run code reviews.

The results were humbling. Claude Code was generating code that worked, but Codex kept flagging the same issues. Inconsistent patterns across files. Security practices I'd normally enforce slipping through. Frontend logic bleeding into places it didn't belong. The kind of entropy that makes a project unmaintainable six months down the road.

I could keep fixing these issues one by one after Codex caught them. Or I could solve the root problem.

So I stopped building features and created a 13-section architecture directive. Not instructions for a feature. A complete operating system for how Claude should approach this codebase going forward.

Separation of concerns: React renders data, FastAPI owns business logic. Security requirements: no dangerouslySetInnerHTML, no eval, no inline scripts. Component reuse strategy: check existing primitives before building new ones. Development vs. production environment parity: SQLite doesn't enforce foreign keys by default the way PostgreSQL does, and DigitalOcean strips API prefixes.
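The SQLite half of that parity rule is easy to demonstrate. SQLite accepts `REFERENCES` clauses in a schema but enforces them only on connections that turn on `PRAGMA foreign_keys`. That setting is off by default in standard builds. PostgreSQL, by contrast, enforces foreign keys by default.

This sketch uses Python's built-in `sqlite3` module with hypothetical `accounts` and `contacts` tables. It shows an orphaned row slipping through, then being rejected once enforcement is on.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE accounts (id INTEGER PRIMARY KEY, name TEXT NOT NULL);
    CREATE TABLE contacts (
        id INTEGER PRIMARY KEY,
        account_id INTEGER NOT NULL REFERENCES accounts(id),
        email TEXT
    );
""")

def insert_orphan(conn: sqlite3.Connection) -> str:
    """Try to insert a contact whose account_id points at no existing account."""
    try:
        conn.execute("INSERT INTO contacts (account_id, email) VALUES (999, 'jon@acme.com')")
        conn.commit()
        return "accepted"
    except sqlite3.IntegrityError as exc:
        conn.rollback()
        return f"rejected: {exc}"

# Standard SQLite builds: the constraint is declared but not enforced.
before = insert_orphan(conn)

# Enforcement is per connection and is ignored inside an open transaction,
# which is why insert_orphan() always commits or rolls back before returning.
conn.execute("PRAGMA foreign_keys = ON")
after = insert_orphan(conn)

print(before)  # accepted
print(after)   # rejected: FOREIGN KEY constraint failed
```

One way to make local development fail the way production will is to turn the pragma on for every local connection. That surfaces orphaned rows before deploy rather than after.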

That last one, environment parity? It's the kind of thing you only know from shipping production code and watching it fail. The directive included specific banned patterns, required practices, and a migration strategy for existing code. It documented that foreign key violations SQLite silently accepts during local development will crash in production on PostgreSQL. It specified that all API routes need to be registered twice (once with the /api prefix for development, once without for DigitalOcean's deployment behavior).
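The double registration can be wrapped in a small helper. The sketch below uses a toy dictionary router so it runs with no dependencies. The `Router` class, the `register_api` helper, and the `/duplicates` endpoint are all hypothetical. In FastAPI, the same idea could be expressed by passing one `APIRouter` to `app.include_router()` twice, once with `prefix="/api"` and once without.

```python
from typing import Callable, Dict

Handler = Callable[[], dict]

class Router:
    """Toy exact-match router standing in for a real web framework."""

    def __init__(self) -> None:
        self.routes: Dict[str, Handler] = {}

    def add(self, path: str, handler: Handler) -> None:
        self.routes[path] = handler

    def dispatch(self, path: str) -> dict:
        handler = self.routes.get(path)
        return handler() if handler else {"status": 404}

def register_api(router: Router, path: str, handler: Handler, prefix: str = "/api") -> None:
    """Register one handler under both path shapes the app may receive."""
    router.add(prefix + path, handler)  # local dev: request arrives as /api/duplicates
    router.add(path, handler)           # production: the prefix was stripped upstream

def list_duplicates() -> dict:
    return {"status": 200, "duplicates": []}

app = Router()
register_api(app, "/duplicates", list_duplicates)

print(app.dispatch("/api/duplicates")["status"])  # 200
print(app.dispatch("/duplicates")["status"])      # 200
```

Routing every endpoint through one helper means a new endpoint can't end up registered under only one of the two paths.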

At first, I had to start every Claude Code session with "read the CleanSmart Architecture Directive.md in docs/ before we begin." Tedious, but necessary. Then Claude added project instructions as a feature. The directive became part of Claude's context automatically. One less manual step, and the guardrails stayed in place without me babysitting.

Creating the directive four weeks in meant I'd already written code that needed refactoring. More rework that proper upfront planning would have prevented. But once the directive existed and Claude internalized it, the codebase stayed clean. The Codex reviews started coming back with fewer issues. The second half of development was dramatically smoother than the first.

Someone building their first app wouldn't know how to create this. They wouldn't know why it matters. They'd deploy to production and spend days debugging issues that the directive prevented me from encountering. Or they'd ship a codebase that becomes untouchable within months.

I transferred 20 years of hard-won software development wisdom into Claude's operating instructions for this project. That's not "vibe coding." That's treating AI as a skilled executor that still needs expert direction. I just wish I'd done it on day one instead of week four.

The Workflow That Actually Worked

I stopped prompting Claude to build features. I started using it to interrogate my thinking.

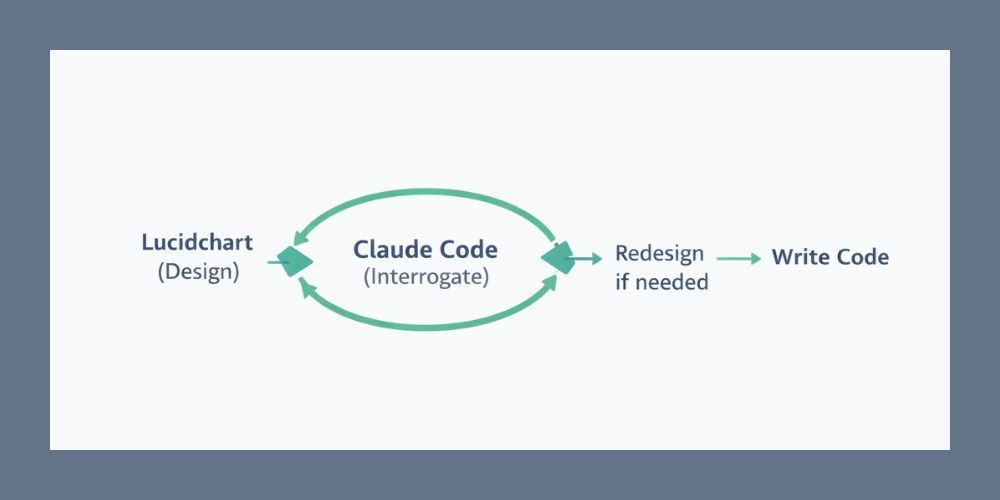

The shift happened mid-project. Instead of jumping from idea to code, I'd create user flow diagrams and data flow diagrams in Lucidchart outside of Claude (or sometimes on paper or a whiteboard, whatever). Then I'd bring them into Claude Code and ask it to challenge them. Where does this break? What edge cases am I missing? What happens when the user does X instead of Y?

Sometimes Claude would push back and I'd realize my design was incomplete. I'd go back outside Claude, redesign, and return for another round of interrogation. Only after the architecture survived scrutiny did we write code.

Then Claude Code introduced planning mode. Game-changer.

Before planning mode, I'd go to Claude or ChatGPT, describe the features and functionality I wanted, think through expected outcomes and potential errors, then craft prompts that would tell Claude Code how to implement everything. That entire step disappeared once planning mode became available. I could do all of that directly inside Claude Code, with Claude as a partner in thinking through the requirements before any code existed.

The tool caught up to the workflow I'd developed out of necessity.

The Speed Trap

Here's the counterintuitive insight that took me weeks to internalize: AI coding tools are so fast that they make you feel like you can skip the design phase. Why diagram when Claude can build it in 10 minutes?

The speed is a mirage.

You end up building the wrong thing faster. Then you spend days fixing what proper planning would have prevented.

The embarrassing part? I knew this. For years at the agency, I told clients the same thing over and over: spend time upfront on planning. It's worth it. Don't rush to design concepts or start developing the website without sitemaps, wireframes, and user testing on those wireframes. The clients who pushed back ("we don't have time for all that") always ended up spending more time on rework than the planning would have cost.

And there I was, doing exactly what I told them not to do.

The speed of AI-assisted development is intoxicating. Claude builds something in minutes that would have taken days. You feel productive. You feel like you're making progress. The dopamine hit of watching features materialize is real. And it tricks you into thinking the fundamentals don't apply anymore.

They still apply. The discipline to slow down when the tool screams "go fast" is hard-won. And it's not something a weekend builder would know to do. Or an experienced developer would remember to do, apparently, until he's three weeks deep in rework wondering why he ignored his own advice.

Where It Is Today

The beta launched January 3rd. After weeks of testing, CleanSmart works the way I intended when I started. In some respects, it works better than I thought it could.

The semantic duplicate detection catches matches that traditional tools miss. The confidence scoring routes the right decisions to humans. The multi-source merging handles the complexity of combining data from different systems without losing fidelity. The audit trail logs every change for compliance.

It's not a demo. It's not a prototype. It's a product that solves a real problem for businesses drowning in dirty data.

And it took four months of focused effort from someone who already knew how to build software. Someone who'd spent a decade watching the exact problem play out at company after company.

The MarTech consulting taught me what needed to exist. The software development experience let me build it. Claude Code accelerated the process. But none of those pieces worked in isolation.

What This Means For You

I'm not saying "don't use AI coding tools." I'm saying stop believing you can skip the fundamentals.

If you're a developer or technical product manager evaluating Claude Code or similar tools, here's what I'd tell you:

- Treat the tool like a team member who needs clear requirements. The clearer your specs, the better the output.

- Create an architecture directive before you start. Codify your standards, security requirements, and patterns. Make your expertise transferable to the AI.

- Design before you build. User flows, data flows, expected outcomes. The diagrams feel like overhead until you skip them and spend three weeks on rework.

- Use AI to interrogate your thinking, not just execute it. The planning and critique functions are as valuable as the code generation.

- Bring your domain knowledge. Claude can write code. It can't tell you what your customers actually need. That's on you.

And if someone tells you they built a production SaaS in an afternoon with no coding experience, ask to see their architecture. Ask about their deployment environment. Ask what happens when a user does something unexpected. Ask if they've ever sat across from an actual customer who needs to use the thing.

Then watch them change the subject.


William Flaiz is the founder of CleanSmart, an AI-powered data cleaning platform built for Marketing Ops, RevOps, and SalesOps teams at growing businesses. He's spent 20+ years in enterprise MarTech and digital transformation, including leadership roles that drove over $200M in operational savings. He holds MIT's Applied Generative AI certification and writes about the realities of AI-assisted product development, data quality, and MarTech that actually works. Connect with him on LinkedIn or at williamflaiz.com.