Semantic Duplicate Detection: A Gentle Intro to Embeddings

Your database has duplicates. You know it does. The question is whether your current approach to finding them actually works, or whether it's catching the easy ones and missing everything else.

Most duplicate detection starts with string matching. Compare two text fields character by character, calculate a similarity score, flag anything above a threshold. It works. Sort of. It catches "Acme Corp" vs "Acme Corp." and maybe even "Acme Corporation" vs "Acme Corp." if your fuzzy matching is decent.

But it completely misses "Acme Corp" vs "ACME Technologies" vs "Bob's old company (Acme)." Those are the duplicates that actually cause problems, because they're the ones nobody catches during a manual review either.

This is where semantic duplicate detection comes in. And the technology behind it, embeddings, is less complicated than it sounds.

String Matching: Where It Breaks

Traditional duplicate detection compares strings. Character by character, token by token. The algorithms have gotten more sophisticated over the years. Levenshtein distance counts the minimum edits needed to transform one string into another. Jaro-Winkler gives extra weight to matching characters at the beginning of strings. Soundex and Metaphone compare phonetic representations.

They're all variations on the same idea: how similar do these two strings look?

That works when duplicates look similar. "Jon Smith" and "John Smith" are one character apart. "123 Main St" and "123 Main Street" share most of their characters. A good fuzzy matching algorithm catches these without breaking a sweat.

The problem shows up when duplicates don't look similar at all. "Robert Johnson" and "Bob Johnson." "International Business Machines" and "IBM." "St. Luke's Medical Center" and "Saint Luke's Hospital." A human recognizes these as the same entity instantly. String matching doesn't, because the strings genuinely aren't similar. The characters are different. The tokens are different. The phonetics might even be different.
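To make the failure concrete, here is a minimal sketch of Levenshtein distance (the classic dynamic-programming version), turned into a 0-to-1 similarity by dividing by the longer string's length. That normalization is one common choice, not the only one, and the helper names are illustrative.

```python
def levenshtein(a: str, b: str) -> int:
    """Minimum single-character insertions, deletions, or substitutions to turn a into b."""
    # prev[j] holds the edit distance between the previous prefix of a and b[:j]
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # delete ca
                curr[j - 1] + 1,           # insert cb
                prev[j - 1] + (ca != cb),  # substitute (free if characters match)
            ))
        prev = curr
    return prev[-1]


def string_similarity(a: str, b: str) -> float:
    """Normalize edit distance to 0..1, where 1.0 means identical strings."""
    longest = max(len(a), len(b)) or 1
    return 1.0 - levenshtein(a, b) / longest


pairs = [
    ("Jon Smith", "John Smith"),
    ("123 Main St", "123 Main Street"),
    ("Robert Johnson", "Bob Johnson"),
    ("IBM", "International Business Machines"),
]
for a, b in pairs:
    print(f"{a!r} vs {b!r}: {string_similarity(a, b):.2f}")
```

Run as written, the typo pair scores 0.90, "Robert Johnson" vs "Bob Johnson" lands around 0.71, and "IBM" vs "International Business Machines" scores about 0.10. A threshold low enough to catch the nickname pair would likely flag plenty of unrelated records too, and the acronym pair is out of reach entirely.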

And then there are the structural variations that accumulate across systems. One CRM stores "Johnson & Johnson" while the ERP has "Johnson and Johnson" and accounting entered "J&J." Three records, one company, and no amount of character-level comparison will reliably connect them, at least not without an extensive library of hand-coded rules for every possible abbreviation and variation.

Hand-coded rules don't scale. You write one for "St." vs "Saint" and then discover you need one for "Ft." vs "Fort" and "Mt." vs "Mount" and "Corp." vs "Corporation" vs "Inc." vs "Incorporated." Each industry has its own abbreviation conventions. Each geography has its own naming patterns. You end up maintaining a rule library that's bigger than the matching algorithm itself.
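A minimal sketch of what that rule library looks like in practice, assuming a simple lowercase, tokenize, expand-abbreviations approach. The `ABBREVIATIONS` table and the `normalize` helper are hypothetical.

```python
import re

# Hypothetical rule table: every abbreviation you support needs its own entry,
# and the table keeps growing as new industries and regions show up.
ABBREVIATIONS = {
    "st": "street",  # ...but "St. Luke's" should become "saint"
    "ft": "fort",
    "mt": "mount",
    "corp": "corporation",
    "inc": "incorporated",
    "&": "and",
}


def normalize(name: str) -> str:
    """Lowercase, split into word tokens (keeping '&'), and expand known abbreviations."""
    tokens = re.findall(r"[a-z0-9']+|&", name.lower())
    return " ".join(ABBREVIATIONS.get(token, token) for token in tokens)


for raw in ["Johnson & Johnson", "Johnson and Johnson", "J&J", "St. Luke's Medical Center"]:
    print(f"{raw!r} -> {normalize(raw)!r}")
```

It connects "Johnson & Johnson" with "Johnson and Johnson," but "J&J" becomes "j and j," and "St. Luke's" turns into "street luke's." Each fix means another entry or a context-sensitive exception.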

Embeddings: The Concept

Here's the core idea behind embeddings, stripped of the jargon.

An embedding converts a piece of text into a list of numbers. Not random numbers. Numbers that capture the meaning of the text. Similar meanings produce similar numbers.

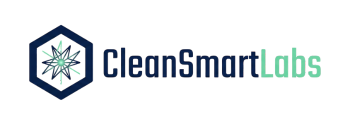

Think of it like GPS coordinates for language. "New York City" and "Manhattan" have different spellings, different character counts, different everything at the string level. But if you plotted their meanings on a map of concepts, they'd be sitting right next to each other. Embeddings are that map, except instead of two dimensions (latitude and longitude), they use hundreds (sometimes thousands) of dimensions to capture the nuances of meaning.

When you generate an embedding for "Acme Technologies LLC," you get a point in this high-dimensional space. When you generate an embedding for "ACME TECH," you get a different point, but it's close to the first one. Close enough that a simple distance calculation can identify them as probable duplicates.

The model generating these embeddings has been trained on enormous amounts of text. It has learned that "Robert" and "Bob" usually refer to the same name. That "LLC" and "Inc." both indicate a business entity. That "St." in an address means "Street" but "St." before a name probably means "Saint." Many of the hand-coded rules you'd need for string matching are already captured, implicitly, in what the model picked up from its training data.

This doesn't require you to run a massive AI system. Pre-trained embedding models are widely available, and generating embeddings for your records is computationally straightforward. You're not training a model. You're using one that already exists to convert your text into comparable numerical representations.

How Clustering Duplicates Works

Once every record has an embedding (that list of numbers representing its meaning), finding duplicates becomes a distance calculation.

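The simplest version: compare every record's embedding to every other record's embedding. If the similarity between two embeddings is above a threshold (equivalently, if the distance between them is below one), flag them as potential duplicates. The most common similarity measure is cosine similarity, which measures the angle between two vectors in that high-dimensional space. A cosine similarity of 1.0 means the vectors point in exactly the same direction, which the model treats as identical meaning. A score near 0.0 means unrelated. Scores can technically range from -1.0 to 1.0, but for duplicate detection the useful range is near the top.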
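Cosine similarity itself is only a few lines. The sketch below uses toy 4-dimensional vectors with invented numbers standing in for real embeddings, and a hypothetical 0.85 cutoff; a real model would produce the vectors and your own data would set the threshold.

```python
import math


def cosine_similarity(u: list[float], v: list[float]) -> float:
    """cos(theta) = (u . v) / (|u| * |v|); 1.0 means the vectors point the same way."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Invented toy vectors, illustrative only (real embeddings have hundreds of dimensions).
acme_llc = [0.81, 0.10, 0.55, 0.02]   # stands in for "Acme Technologies LLC"
acme_tech = [0.78, 0.15, 0.58, 0.05]  # stands in for "ACME TECH"
zebra = [0.05, 0.90, 0.02, 0.40]      # stands in for an unrelated company

THRESHOLD = 0.85  # hypothetical cutoff; tune it on your own data
for name, vec in [("ACME TECH", acme_tech), ("Zebra", zebra)]:
    score = cosine_similarity(acme_llc, vec)
    print(name, round(score, 3), "flag" if score >= THRESHOLD else "skip")
```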

The obvious problem with comparing every record to every other record is scale. Ten thousand records means 50 million comparisons. A hundred thousand records means 5 billion. That's not practical.
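The numbers come from counting unordered pairs, n(n-1)/2, which grows with the square of the record count. A quick check:

```python
def pairwise_comparisons(n: int) -> int:
    """Unordered pairs among n records: n choose 2 = n * (n - 1) / 2."""
    return n * (n - 1) // 2


for n in (10_000, 100_000):
    print(f"{n:,} records -> {pairwise_comparisons(n):,} comparisons")
```

Ten thousand records give 49,995,000 pairs and a hundred thousand give 4,999,950,000, which is where the rounded 50 million and 5 billion figures come from.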

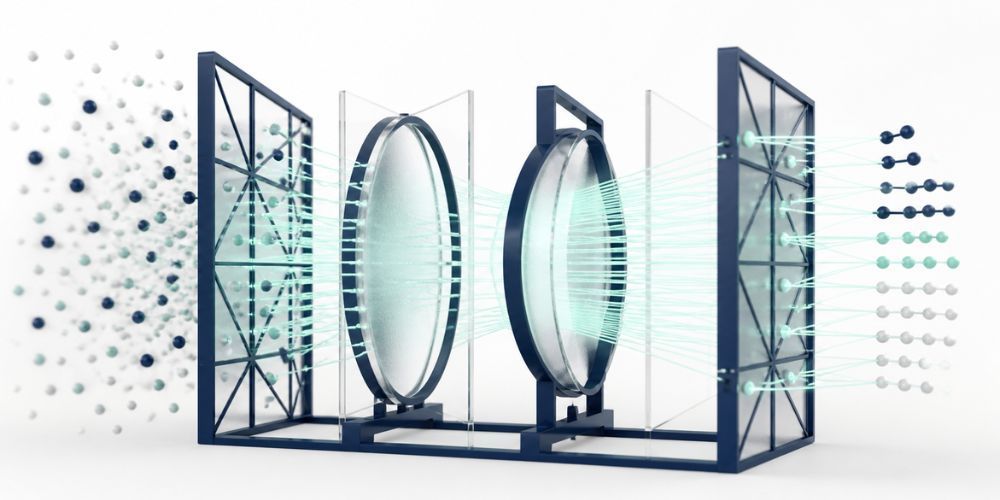

Clustering solves this. Instead of comparing everything to everything, you first group records into clusters based on rough similarity, then only compare records within the same cluster. Think of it as sorting your records into buckets by general category before doing the detailed comparison.

Blocking is the term you'll hear most often. You "block" on a specific attribute, like the first three characters of a company name, or a zip code, or an industry code, then run detailed matching only within each block. If "Acme Technologies" and "ACME TECH" both land in the "ACM" block, they get compared. A record starting with "Zebra" never gets compared to them, saving millions of unnecessary calculations.
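A minimal blocking sketch, assuming the first-three-characters key described above, uppercased and with spaces and punctuation stripped so "ACME TECH" and "Acme Technologies" share a key. The function names are illustrative.

```python
from collections import defaultdict
from itertools import combinations


def block_key(name: str) -> str:
    """First three letters/digits, uppercased, ignoring spaces and punctuation."""
    chars = "".join(ch for ch in name.upper() if ch.isalnum())
    return chars[:3]


def candidate_pairs(names: list[str]) -> list[tuple[str, str]]:
    """Group records by block key and pair up records only within each block."""
    blocks: dict[str, list[str]] = defaultdict(list)
    for name in names:
        blocks[block_key(name)].append(name)
    pairs: list[tuple[str, str]] = []
    for members in blocks.values():
        pairs.extend(combinations(members, 2))
    return pairs


records = ["Acme Technologies", "ACME TECH", "Zebra Imaging", "Acme Corp."]
for pair in candidate_pairs(records):
    print(pair)
```

Four records produce three candidate pairs instead of six, and the savings grow quickly as records spread across more blocks. The trade-off shows up too: "Bob's old company (Acme)" lands in the "BOB" block and never meets the other Acme records, which is one reason to block on embeddings instead.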

More sophisticated approaches use the embeddings themselves for blocking. Approximate nearest neighbor (ANN) search can quickly find the embeddings closest to any given point without comparing against the full dataset. FAISS, an open-source library from Meta's AI research group, is one widely used implementation. The same technique powers semantic search over very large collections, and it applies just as well to duplicate detection.

The practical workflow looks like this: generate embeddings for all records, index them using an approximate nearest neighbor algorithm, then for each record, retrieve the top N most similar records and evaluate whether they're genuine duplicates. The N is usually small, maybe 5 or 10, which keeps the comparison count manageable even for large datasets.
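The workflow can be sketched end to end in plain Python. To stay dependency-free, this sketch uses exact brute-force search over unit-length vectors, which is precisely the all-pairs work an ANN index exists to avoid; at scale you would replace `top_n_neighbors` with an index (FAISS, for example, provides exact and approximate indexes with `add` and `search` methods). The toy vectors and the `top_n_neighbors` and `review_queue` helpers are hypothetical.

```python
import heapq
import math


def to_unit(vec: list[float]) -> list[float]:
    """Scale a vector to length 1 so a dot product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def top_n_neighbors(embeddings: dict[str, list[float]], n: int = 5) -> dict:
    """Exact brute-force stand-in for an ANN index: each record's n most similar others."""
    unit = {rid: to_unit(vec) for rid, vec in embeddings.items()}
    neighbors = {}
    for rid, u in unit.items():
        scored = (
            (sum(a * b for a, b in zip(u, v)), other)
            for other, v in unit.items()
            if other != rid
        )
        neighbors[rid] = heapq.nlargest(n, scored)
    return neighbors


def review_queue(embeddings, n=5, threshold=0.85):
    """Deduplicated (score, record_a, record_b) pairs at or above the threshold, best first."""
    seen, queue = set(), []
    for rid, candidates in top_n_neighbors(embeddings, n).items():
        for score, other in candidates:
            pair = tuple(sorted((rid, other)))
            if score >= threshold and pair not in seen:
                seen.add(pair)
                queue.append((round(score, 3), *pair))
    return sorted(queue, reverse=True)


# Hypothetical 4-dimensional embeddings (real ones have hundreds of dimensions).
toy = {
    "acme-llc": [0.81, 0.10, 0.55, 0.02],
    "acme-tech": [0.78, 0.15, 0.58, 0.05],
    "zebra": [0.05, 0.90, 0.02, 0.40],
}
print(review_queue(toy, n=2))
```

Each pair appears once in the queue even though both records find each other, which keeps the human review list from doubling up.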

Threshold Tuning: The Art of the Cutoff

Here's where it gets tricky. You need to decide: how similar is similar enough?

Set your similarity threshold too high (say 0.98) and you'll only catch near-identical records. You'll have very few false positives, but you'll miss the "Robert Johnson" / "Bob Johnson" matches that were the whole point of using semantic matching in the first place.

Set it too low (say 0.70) and you'll flag records that aren't actually duplicates. "Johnson Controls" and "Johnson & Johnson" might score a 0.75 because they share a common name, but they're completely different companies. Reviewing false positives wastes time and erodes trust in the system.

The right threshold depends on your data. There's no universal number.

For vendor masters with lots of abbreviation variations, a threshold around 0.82-0.88 often works well. For contact records where you're matching on full names plus company, you might push it higher, to 0.90-0.95, because there's more text to compare and the signal is stronger.

The best approach is to start with a batch of known duplicates, records your team has already identified and merged manually. Run the embedding comparison on those records and see what similarity scores they produce. That gives you an empirical baseline. If your known duplicates consistently score above 0.85, that's your starting threshold.
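One way to turn that baseline into a number, sketched under assumptions: take the similarity scores of pairs your team already confirmed as duplicates and pick the highest cutoff that still keeps most of them (90% here, an arbitrary choice) at or above the line. The scores below are invented for illustration.

```python
import math


def baseline_threshold(known_dup_scores: list[float], coverage: float = 0.9) -> float:
    """Highest cutoff that keeps at least `coverage` of known duplicates at or above it."""
    ranked = sorted(known_dup_scores, reverse=True)
    keep = max(1, math.ceil(coverage * len(ranked)))
    return ranked[keep - 1]


# Invented similarity scores for ten pairs your team already merged by hand.
known = [0.97, 0.93, 0.91, 0.90, 0.89, 0.88, 0.87, 0.86, 0.86, 0.72]
print(baseline_threshold(known))
```

With these made-up scores, nine of the ten known duplicates sit at or above 0.86, so that becomes the starting threshold; the 0.72 outlier is worth a look on its own.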

Then tune it iteratively. Run the matching at your initial threshold. Review the flagged pairs. Count how many are genuine duplicates (true positives) and how many aren't (false positives). Adjust the threshold up or down and repeat. Most teams converge on a good threshold within two or three iterations.
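Each iteration boils down to counting reviewer verdicts above the cutoff. A minimal sketch, assuming you store each reviewed pair as a (similarity, reviewer_verdict) tuple; the sample data is hypothetical.

```python
def review_round(scored_pairs: list[tuple[float, bool]], threshold: float) -> tuple[int, int]:
    """Return (true_positives, false_positives) among pairs flagged at this threshold."""
    verdicts = [is_dup for score, is_dup in scored_pairs if score >= threshold]
    true_pos = sum(verdicts)  # True counts as 1, False as 0
    return true_pos, len(verdicts) - true_pos


# Hypothetical reviewed sample: (similarity score, reviewer says duplicate?)
reviewed = [(0.95, True), (0.91, True), (0.89, False), (0.87, True), (0.84, True),
            (0.83, False), (0.79, False), (0.78, False), (0.76, True), (0.74, False)]
for t in (0.80, 0.85, 0.90):
    tp, fp = review_round(reviewed, t)
    print(f"threshold {t:.2f}: {tp} true positives, {fp} false positives")
```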

One thing that helps enormously: don't try to auto-merge at any threshold. Flag and review. Let humans make the final call on whether two records represent the same entity. The embedding similarity score gets you from 50 million possible comparisons down to a few hundred that deserve human attention. That's the value. Trying to eliminate human review entirely is where duplicate detection projects go sideways.

Measuring How Well It Works

You've built your semantic matching pipeline. You've tuned your threshold. How do you know if it's actually performing?

Three metrics matter.

Precision answers: of the pairs we flagged as duplicates, how many actually were? If you flagged 100 pairs and 85 were genuine duplicates, your precision is 85%. Low precision means your team wastes time reviewing false matches.

Recall answers: of all the duplicates that exist, how many did we find? If your database contains 200 duplicate pairs and you caught 160 of them, your recall is 80%. Low recall means duplicates are slipping through.

F1 score is the harmonic mean of precision and recall. It's a single number that balances both concerns. A high F1 means you're catching most duplicates without drowning in false positives.
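The three metrics fit in a few lines of Python. The counts are hypothetical: a run that flagged 188 pairs, of which reviewers confirmed 160, against a database believed to hold 200 duplicate pairs.

```python
def precision_recall_f1(true_pos: int, flagged: int, actual_dups: int) -> tuple[float, float, float]:
    """Precision = TP / flagged, recall = TP / actual duplicates, F1 = their harmonic mean."""
    precision = true_pos / flagged if flagged else 0.0
    recall = true_pos / actual_dups if actual_dups else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


p, r, f1 = precision_recall_f1(true_pos=160, flagged=188, actual_dups=200)
print(f"precision {p:.1%}, recall {r:.1%}, F1 {f1:.3f}")
```

That run lands at roughly 85% precision, 80% recall, and an F1 of about 0.82. Recall needs an estimate of the total number of duplicates, which in practice usually comes from a hand-labeled sample.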

The tension between precision and recall maps directly to your threshold decision. Raising the threshold increases precision (fewer false positives) but decreases recall (more missed duplicates). Lowering it does the opposite. The F1 score helps you find the balance point.
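Finding that balance point can be automated over a labeled sample: sweep candidate thresholds and keep the one with the highest F1. This sketch assumes the sample is fully labeled, so the number of true duplicates in it is known; the data is invented.

```python
def best_threshold(scored_pairs, total_duplicates, candidates):
    """Return (threshold, f1, precision, recall) with the highest F1 on a labeled sample."""
    best = None
    for t in candidates:
        verdicts = [is_dup for score, is_dup in scored_pairs if score >= t]
        tp = sum(verdicts)
        if tp == 0:
            continue  # nothing correct flagged, so F1 is 0
        precision = tp / len(verdicts)
        recall = tp / total_duplicates
        f1 = 2 * precision * recall / (precision + recall)
        if best is None or f1 > best[1]:
            best = (t, f1, precision, recall)
    return best


# Hypothetical labeled sample: (similarity score, is it really a duplicate?)
reviewed = [(0.95, True), (0.91, True), (0.89, False), (0.87, True), (0.84, True),
            (0.83, False), (0.79, False), (0.78, False), (0.76, True), (0.74, False)]
total = sum(is_dup for _, is_dup in reviewed)  # assumes every duplicate is labeled
print(best_threshold(reviewed, total, [0.75, 0.80, 0.85, 0.90]))
```

On this sample, 0.80 wins with an F1 of about 0.73: dropping to 0.75 picks up one more duplicate but two extra false positives, while 0.85 gives up a true duplicate to remove just one false positive.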

For most business applications, you want precision above 80% and recall above 75%. That means your review queue is mostly genuine duplicates, and most of the duplicates in your database are getting caught. Perfect scores aren't realistic, and chasing them leads to over-tuning that doesn't generalize to new data.

Track these metrics over time, not just at initial deployment. As new records enter your system and naming conventions shift, matching performance will drift even if the embedding model itself never changes. A quarterly evaluation against a fresh sample of known duplicates keeps your thresholds calibrated.

From Theory to Practice

The gap between understanding embeddings conceptually and deploying them against your actual data is smaller than you'd expect. The hard parts, training the embedding model and building the approximate nearest neighbor infrastructure, are solved problems with off-the-shelf tools.

The parts that require your attention are the ones specific to your data: which fields to embed, how to combine multiple fields into a single comparison, what threshold works for your particular mix of records, and how to build a review workflow that your team will actually use.
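For the multi-field question, two common options are concatenating fields into one string before embedding (for example "company: Acme Tech | city: Boston") or scoring each field separately and combining the scores. Here is a sketch of the second option as a weighted average; the field names and weights are hypothetical and would need tuning just like the threshold.

```python
def combined_score(field_scores: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of per-field similarities; fields with no score are skipped
    and the remaining weights are renormalized."""
    used = {field: w for field, w in weights.items() if field in field_scores}
    total = sum(used.values())
    if total == 0:
        return 0.0
    return sum(field_scores[field] * w for field, w in used.items()) / total


weights = {"company": 0.6, "city": 0.2, "phone": 0.2}  # hypothetical weights
print(combined_score({"company": 0.91, "city": 1.0, "phone": 0.40}, weights))
print(combined_score({"company": 0.91, "city": 1.0}, weights))  # phone missing
```

Renormalizing over the fields that are present keeps a missing phone number from dragging an otherwise strong match below the threshold.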

CleanSmart's SmartMatch handles this without requiring you to configure embedding models or tune infrastructure. It uses semantic similarity to identify duplicates that string matching misses, catching the abbreviation variations, legal suffix differences, and naming inconsistencies that live in every real-world database. Results get surfaced for human review with similarity scores and matched field breakdowns, so your team can make confident merge decisions without second-guessing the algorithm.

The technology behind semantic duplicate detection is sophisticated. Using it doesn't have to be.

What's the difference between fuzzy matching and semantic duplicate detection?

Fuzzy matching compares the characters in two strings and calculates how similar they look. It catches typos, minor spelling variations, and formatting differences. Semantic matching compares the meaning of two strings using embeddings. It catches conceptual matches that look nothing alike at the character level, like "IBM" and "International Business Machines" or "Bob" and "Robert." Most effective duplicate detection uses both: fuzzy matching for the easy catches and semantic matching for the ones that fuzzy matching misses.

Do I need machine learning expertise to use semantic duplicate detection?

No. Pre-trained embedding models are available off the shelf, and tools like CleanSmart abstract the complexity entirely. You don't need to train models, tune hyperparameters, or manage infrastructure. The expertise you do need is domain knowledge about your data: understanding which fields matter for matching, what constitutes a genuine duplicate in your context, and how to structure a review workflow. That's business knowledge, not ML knowledge.

How accurate is semantic duplicate detection compared to manual review?

In most benchmarks, semantic matching with human review catches more duplicates than either approach alone. Automated matching at well-tuned thresholds typically achieves 80-90% recall, meaning it surfaces the vast majority of duplicates for review. Human reviewers then confirm or reject each flagged pair, which keeps precision high. Pure manual review, scrolling through sorted lists looking for duplicates, rarely exceeds 60-70% recall because humans miss non-obvious matches and lose concentration over long review sessions.

William Flaiz is the founder of CleanSmart, an AI-powered data cleaning platform built for Marketing Ops, RevOps, and SalesOps teams at growing businesses. He's spent 20+ years in enterprise MarTech and digital transformation, including leadership roles that drove over $200M in operational savings. He holds MIT's Applied Generative AI certification and writes about the realities of AI-assisted product development, data quality, and MarTech that actually works. Connect with him on LinkedIn or at williamflaiz.com.