From Spreadsheet to Single Source of Truth: A No‑Code Workflow

Spreadsheets multiply. It starts innocently enough: a marketing list here, a sales export there, a customer file someone pulls from Shopify. Before long, you're managing five versions of what should be one customer database, each with different formatting, different completeness, and different ideas about what "correct" looks like.

The answer everyone gives is "create a single source of truth." The problem is that creating one has traditionally required SQL skills, expensive middleware, or a dedicated data engineer who doesn't have time for your project.

It doesn't have to be that complicated.

What a Single Source of Truth Actually Means

A single source of truth isn't a specific tool or database. It's a principle: every question about your data should have exactly one authoritative answer.

When your marketing team asks "how many active customers do we have," they shouldn't get a different number than sales. When someone looks up a contact's phone number, they shouldn't find three different formats across three different systems. When your email platform pulls customer data, it should pull from the same place your CRM does.

Most businesses don't have this. They have data scattered across tools, each tool slightly out of sync with the others. The result is wasted time reconciling differences, wrong decisions based on stale information, and a lingering distrust of any number anyone quotes in a meeting.

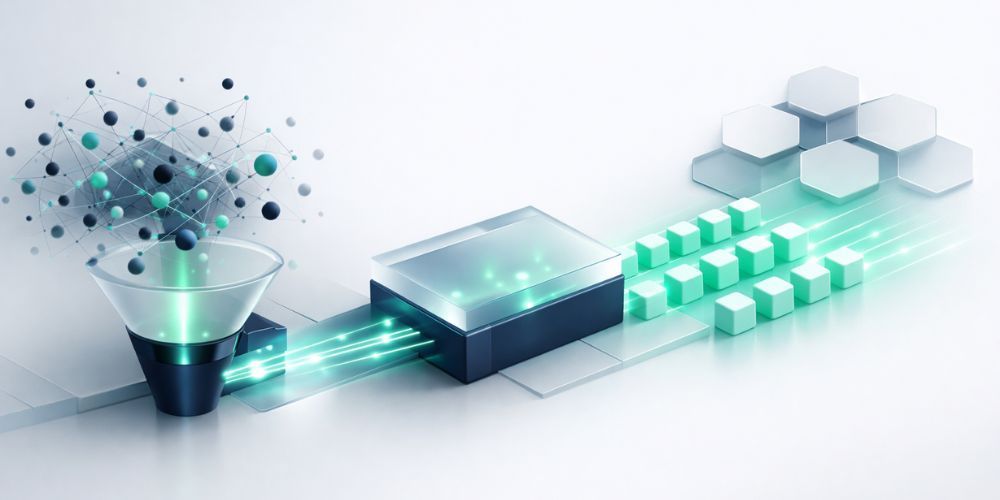

The No-Code Path to Clean, Unified Data

Creating a single source of truth used to require custom code. You'd write scripts to extract data from each source, transform it into a common format, and load it into a central database. That extract-transform-load (ETL) pipeline then became someone's full-time job to maintain.

No-code data cleaning tools have changed this. You can now take raw CSVs from multiple sources, run them through an automated cleaning process, and produce a unified dataset without writing a line of code. The entire workflow happens through a visual interface.

Here's what that process looks like in practice.

Step 1: Start With Your Raw Files

Gather exports from every system that contains relevant data. For a customer database, this might mean:

- A CSV from your email marketing platform

- A contact export from your CRM

- A customer list from your e-commerce platform

- That spreadsheet the sales team maintains in Google Sheets

Don't clean anything yet. Upload the files as they are. You need to see the full scope of inconsistency before you can fix it.
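A no-code tool does this step for you on upload, but a rough sketch shows what "upload the files as they are" means in practice: every row is kept unchanged and tagged with the file it came from. The file names, columns, and the `load_raw` helper are hypothetical, and the inline strings stand in for real exports.

```python
import csv
import io

# Hypothetical stand-ins for two raw exports; real files would be opened from disk.
RAW_EXPORTS = {
    "email_platform.csv": "Email,Full Name\njon@acme.com,Jon Smith\n",
    "crm_export.csv": "email,name,phone\nJON@ACME.COM,John Smith,(555) 123-4567\n",
}

def load_raw(exports):
    """Read every export unchanged, tagging each row with the file and line it came from."""
    rows = []
    for filename, text in exports.items():
        # The header is line 1, so the first data row is line 2.
        for line_no, row in enumerate(csv.DictReader(io.StringIO(text)), start=2):
            rows.append({"source_file": filename, "source_line": line_no, "raw": dict(row)})
    return rows

rows = load_raw(RAW_EXPORTS)

# Listing every column name across sources exposes the inconsistency up front.
columns_seen = sorted({column for r in rows for column in r["raw"]})
print(columns_seen)
```

Seeing `Email` and `email`, or `Full Name` and `name`, side by side is the point: the mismatch is visible before any cleanup decision is made.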

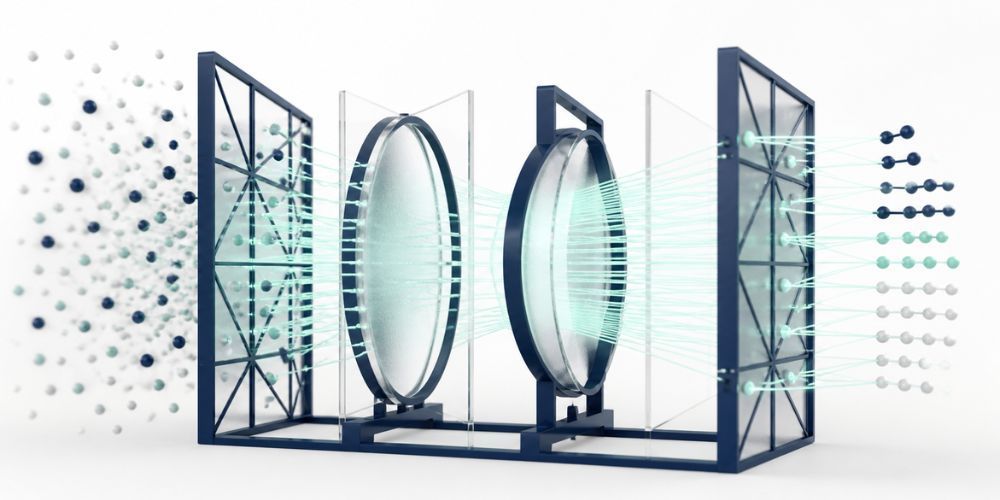

Step 2: Let Automation Handle the First Pass

Modern data cleaning tools can handle the mechanical work automatically. This includes:

Duplicate detection: Finding records that represent the same person or company, even when spelled differently. "Jon Smith" and "John Smith" at the same email domain are probably the same person. AI-powered matching catches what simple string comparison misses.
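To make the idea concrete, here is a minimal sketch of fuzzy duplicate detection. It uses plain character-level similarity from Python's standard `difflib` module, which is a much simpler technique than the AI-powered matching described above, and the `likely_duplicates` helper, the record layout, and the 0.85 threshold are all assumptions for illustration.

```python
from difflib import SequenceMatcher
from itertools import combinations

def email_domain(email):
    """Return the part after the last '@', lowercased."""
    return email.strip().lower().rpartition("@")[2]

def name_similarity(a, b):
    """Character-level similarity between two names, from 0.0 to 1.0."""
    return SequenceMatcher(None, a.strip().lower(), b.strip().lower()).ratio()

def likely_duplicates(records, threshold=0.85):
    """Return (id, id, score) for record pairs that share a domain and have similar names."""
    pairs = []
    for left, right in combinations(records, 2):
        if email_domain(left["email"]) != email_domain(right["email"]):
            continue
        score = name_similarity(left["name"], right["name"])
        if score >= threshold:
            pairs.append((left["id"], right["id"], round(score, 2)))
    return pairs

records = [
    {"id": 1, "name": "Jon Smith", "email": "jon@acme.com"},
    {"id": 2, "name": "John Smith", "email": "john.smith@acme.com"},
    {"id": 3, "name": "Maria Lopez", "email": "maria@acme.com"},
    {"id": 4, "name": "John Smith", "email": "jsmith@other.org"},
]
print(likely_duplicates(records))
```

Only records 1 and 2 are paired. Record 4 has an identical name but a different domain, so this sketch leaves it alone, which is the kind of case a real tool would surface for review rather than merge.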

Format standardization: Normalizing phone numbers to a consistent format, fixing capitalization on names, standardizing date formats. The kind of cleanup that takes hours when done manually but seconds when automated.
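A small sketch of what format standardization involves, assuming US phone numbers and a target format of `+1 AAA-BBB-CCCC`. Both helper names are hypothetical, and the name rule is deliberately naive.

```python
import re

def normalize_us_phone(raw):
    """Return '+1 AAA-BBB-CCCC' for 10-digit US numbers, or None if the input doesn't fit."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]  # drop a leading country code
    if len(digits) != 10:
        return None  # leave for human review rather than guessing
    return f"+1 {digits[:3]}-{digits[3:6]}-{digits[6:]}"

def normalize_name(raw):
    """Collapse whitespace and title-case a name (naive: mishandles names like 'McDonald')."""
    return " ".join(raw.split()).title()

print(normalize_us_phone("(555) 123-4567"))  # +1 555-123-4567
print(normalize_name("  jON   smith "))      # Jon Smith
```

Returning `None` for numbers that don't fit, instead of forcing them into the format, keeps the odd cases visible for the review step.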

Missing value prediction: Filling gaps based on patterns in existing data. If 90% of records with ZIP code 90210 are in Beverly Hills, CA, a tool can reasonably fill in the city and state for records that only have the ZIP.
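The ZIP code example can be sketched as a simple majority lookup. This is an illustration under stated assumptions, not how any particular tool predicts values: the field names, the `min_share` cutoff of 0.9, and both helpers are hypothetical.

```python
from collections import Counter, defaultdict

def build_zip_lookup(records, min_share=0.9):
    """Map each ZIP to its dominant (city, state), only when it covers min_share of known rows."""
    seen = defaultdict(Counter)
    for r in records:
        if r.get("zip") and r.get("city") and r.get("state"):
            seen[r["zip"]][(r["city"], r["state"])] += 1
    lookup = {}
    for zip_code, counts in seen.items():
        (city, state), top = counts.most_common(1)[0]
        share = top / sum(counts.values())
        if share >= min_share:
            lookup[zip_code] = (city, state, share)
    return lookup

def suggest_fills(records, lookup):
    """Yield suggestions instead of editing records, so a person can review them."""
    for r in records:
        if r.get("zip") in lookup and not (r.get("city") and r.get("state")):
            city, state, share = lookup[r["zip"]]
            yield {"id": r["id"], "city": city, "state": state, "confidence": round(share, 2)}

# Nine complete Beverly Hills rows, one Los Angeles row, one row with only a ZIP.
records = [{"id": i, "zip": "90210", "city": "Beverly Hills", "state": "CA"} for i in range(1, 10)]
records.append({"id": 10, "zip": "90210", "city": "Los Angeles", "state": "CA"})
records.append({"id": 11, "zip": "90210", "city": "", "state": ""})

suggestions = list(suggest_fills(records, build_zip_lookup(records)))
print(suggestions)
```

The sketch produces a suggestion with a confidence value rather than writing the value directly, so the fill can still be rejected later.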

Anomaly flagging: Identifying values that look wrong. An age of 250. A negative order total. A phone number with the wrong number of digits. These get flagged for review rather than silently accepted.
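The three examples above translate into simple rules. The sketch below assumes hypothetical field names and thresholds (an age range of 0 to 120, phone numbers of 10 or 11 digits), and it only reports problems without changing the record.

```python
import re

# Hypothetical rules mirroring the examples in the text; real thresholds depend on your data.
RULES = [
    ("age", lambda v: 0 <= v <= 120, "age outside 0-120"),
    ("order_total", lambda v: v >= 0, "negative order total"),
    ("phone", lambda v: len(re.sub(r"\D", "", v)) in (10, 11), "unexpected phone digit count"),
]

def flag_anomalies(record):
    """Return (field, value, reason) for each value that fails a rule; never modify the record."""
    flags = []
    for field, is_valid, reason in RULES:
        value = record.get(field)
        if value is not None and not is_valid(value):
            flags.append((field, value, reason))
    return flags

suspect = {"age": 250, "order_total": -19.99, "phone": "555-1234"}
for field, value, reason in flag_anomalies(suspect):
    print(f"{field}={value!r}: {reason}")
```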

The key word here is "first pass." Automation handles the obvious cleanup. What remains are the judgment calls that need human input.

Step 3: Review Before Committing

This is where no-code workflows differ from black-box automation. Good tools show you exactly what they're about to change and let you approve or reject each modification.

A preview interface might show:

- "These 47 records will be merged as duplicates (confidence: 94%)"

- "Phone number (555) 123-4567 will be standardized to +1 555-123-4567"

- "Missing city field will be filled based on ZIP code match"

You review the suggestions. Accept the ones that look right. Reject the ones that don't. Override where needed.

This preview step matters more than it might seem. Data cleaning involves judgment. "Robert" and "Bob" might be the same person, or they might be a father and son at the same company. A human can tell the difference in context. The software can't, but it can surface the decision for you to make.
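One way to picture the preview step is as a list of proposed changes that are only applied once someone approves them. The `ProposedChange` structure and both helpers below are hypothetical, a sketch of the pattern rather than any tool's actual data model.

```python
from dataclasses import dataclass

@dataclass
class ProposedChange:
    change_id: int
    record_id: int
    field: str
    old: str
    new: str
    reason: str
    confidence: float

def describe(change):
    """Render one proposal as a line a reviewer can accept or reject."""
    return (f"#{change.change_id} record {change.record_id}: {change.field} "
            f"{change.old!r} -> {change.new!r} ({change.reason}, confidence {change.confidence:.0%})")

def apply_approved(records, proposals, approved_ids):
    """Return new records with only approved changes applied; the input is left untouched."""
    updated = {record_id: dict(record) for record_id, record in records.items()}
    for change in proposals:
        if change.change_id in approved_ids:
            updated[change.record_id][change.field] = change.new
    return updated

records = {1: {"phone": "(555) 123-4567", "city": ""}}
proposals = [
    ProposedChange(1, 1, "phone", "(555) 123-4567", "+1 555-123-4567", "format standardization", 0.99),
    ProposedChange(2, 1, "city", "", "Beverly Hills", "ZIP code match", 0.90),
]
for proposal in proposals:
    print(describe(proposal))

# The reviewer accepts the phone change and rejects the city fill.
reviewed = apply_approved(records, proposals, approved_ids={1})
print(reviewed)
```

Because `apply_approved` works on a copy, rejecting a suggestion costs nothing: the original records are exactly as they were.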

Step 4: Use Undo as Your Safety Net

Mistakes happen. Someone approves the wrong merge. A format change breaks an integration. A confident prediction turns out to be wrong.

The fix shouldn't require restoring from backup or re-importing raw data. Look for tools that maintain complete change histories, letting you undo specific operations without losing other work. Changed a phone format and it broke your dialer? Undo that specific change. Everything else stays.

This safety net is what makes aggressive data cleaning possible. You can experiment knowing that nothing is permanent until you're satisfied with the result.
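Selective undo depends on recording the old value of every change, grouped by operation. The `ChangeLog` class below is a hypothetical, minimal sketch of that idea. It assumes a simple conflict rule: a field is only reverted if it still holds the value the operation wrote.

```python
class ChangeLog:
    """Records every field change per operation so one operation can be reverted on its own."""

    def __init__(self, records):
        self.records = records   # {record_id: {field: value}}
        self.history = {}        # {operation: [(record_id, field, old, new), ...]}

    def apply(self, operation, record_id, field, new_value):
        old_value = self.records[record_id].get(field)
        self.records[record_id][field] = new_value
        self.history.setdefault(operation, []).append((record_id, field, old_value, new_value))

    def undo(self, operation):
        """Revert one operation, newest change first; skip fields changed again since then."""
        skipped = []
        for record_id, field, old_value, new_value in reversed(self.history.pop(operation, [])):
            if self.records[record_id].get(field) == new_value:
                self.records[record_id][field] = old_value
            else:
                skipped.append((record_id, field))
        return skipped

log = ChangeLog({1: {"phone": "(555) 123-4567", "name": "jon smith"}})
log.apply("phone_format", 1, "phone", "+1 555-123-4567")
log.apply("name_case", 1, "name", "Jon Smith")

# The new phone format broke the dialer: undo only that operation.
skipped = log.undo("phone_format")
print(log.records[1])
```

The phone number returns to its original format while the name fix stays in place.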

Building Trust With Audit Trails

A single source of truth only works if people trust it. That trust comes from transparency: being able to answer "where did this data come from?" and "why does it look like this?" at any moment.

Audit trails track:

- Source attribution: Which original file contributed this record

- Change history: Every modification, when it happened, and why

- Confidence scores: How certain the system was about automated changes

- Human decisions: Which merges and corrections were manually approved

When someone questions a number in a report, you can trace it back to source files. When compliance asks how customer data is maintained, you have documentation. When a value looks wrong six months from now, you can see exactly when and how it changed.
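As a rough sketch, an audit trail entry can carry all four of those items in one record. The `AuditEntry` fields and the `trace` helper are assumptions for illustration, and the sample entries, names, and dates are made up.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

@dataclass(frozen=True)
class AuditEntry:
    record_id: int
    field: str
    old: str
    new: str
    reason: str                   # why the change was made
    source_file: str              # which original file contributed the record
    confidence: Optional[float]   # None for purely manual edits
    approved_by: Optional[str]    # None if applied without human review
    at: datetime

def trace(entries, record_id, field):
    """Return the chronological history of one field on one record."""
    matching = [e for e in entries if e.record_id == record_id and e.field == field]
    return sorted(matching, key=lambda e: e.at)

entries = [
    AuditEntry(1, "phone", "+1 555-123-4567", "5551234567", "dialer compatibility fix",
               "crm_export.csv", None, "dana", datetime(2024, 3, 2, tzinfo=timezone.utc)),
    AuditEntry(1, "phone", "(555) 123-4567", "+1 555-123-4567", "format standardization",
               "crm_export.csv", 0.99, None, datetime(2024, 1, 15, tzinfo=timezone.utc)),
]
for entry in trace(entries, 1, "phone"):
    print(entry.at.date(), entry.reason, entry.approved_by or "automatic")
```

Marking entries as frozen reflects the intent of an audit trail: history is appended to, never edited.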

This isn't just good practice. For businesses with GDPR, CCPA, or other data privacy obligations, it's increasingly a requirement.

Connecting to the Rest of Your Stack

A single source of truth is useless if it sits in isolation. The cleaned, unified dataset needs to flow back into the tools your team actually uses.

The handoff typically works in two directions:

Downstream to operational tools: Push cleaned data back to your CRM, email platform, or e-commerce system. This might mean generating platform-specific export files that match each tool's import requirements, or connecting via direct integration to sync changes automatically.

Upstream to analysis tools: Feed the unified dataset into your BI platform, reporting dashboards, or analytics tools. When everyone analyzes the same underlying data, reports finally start agreeing with each other.

The mechanics vary by tool. Some platforms offer native connectors. Others require export files that you import manually. The important thing is that the flow is documented and repeatable. Your single source of truth should update the rest of your stack through a defined process, not ad-hoc file sharing.
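A documented, repeatable export can be as simple as one mapping per downstream tool. In the sketch below, the `crm` and `dialer` profiles, their column names, and the digits-only phone format are all hypothetical. The point is that every export derives from the same canonical record.

```python
import csv
import io

def digits_only(phone):
    return "".join(ch for ch in phone if ch.isdigit())

# Hypothetical export profiles: target column name -> function of the canonical record.
EXPORT_PROFILES = {
    "crm": {
        "Full Name": lambda r: r["name"],
        "Email": lambda r: r["email"],
        "Phone": lambda r: r["phone"],                   # keeps the canonical format
    },
    "dialer": {
        "contact_name": lambda r: r["name"],
        "phone_number": lambda r: digits_only(r["phone"]),
    },
}

def export_csv(records, profile_name):
    """Render canonical records as CSV text shaped for one downstream tool."""
    profile = EXPORT_PROFILES[profile_name]
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(profile), lineterminator="\n")
    writer.writeheader()
    for record in records:
        writer.writerow({column: build(record) for column, build in profile.items()})
    return buffer.getvalue()

canonical = [{"name": "Jon Smith", "email": "jon@acme.com", "phone": "+1 555-123-4567"}]
print(export_csv(canonical, "crm"))
print(export_csv(canonical, "dialer"))
```

Adding a new destination means adding a profile, not creating another copy of the data to maintain by hand.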

Governance: Keeping the Source of Truth Accurate

Creating a single source of truth is a project. Maintaining it is a process.

Data degrades over time. People change jobs and their contact information becomes outdated. New records get added through forms and imports without going through the cleaning pipeline. Edge cases slip through that the original rules didn't anticipate.

Governance practices to consider:

Regular cleaning cycles: Run your data through the cleaning process on a schedule, weekly or monthly depending on volume. New duplicates and format issues will emerge. Catch them before they compound.

Input validation: Where possible, enforce data quality at the point of entry. Form fields that validate email formats. Dropdown menus instead of free text for common values. The less cleanup required after the fact, the better.
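Most form builders offer these checks as settings, but the underlying logic looks roughly like the sketch below. The email pattern is deliberately loose (a sanity check, not full address validation), and the allowed country values and the `validate_submission` helper are hypothetical.

```python
import re

# Loose pattern: something@something.tld, with no spaces or extra '@' signs.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")
ALLOWED_COUNTRIES = {"US", "CA", "GB"}   # hypothetical dropdown values

def validate_submission(form):
    """Return a dict of field -> error message; an empty dict means the entry is acceptable."""
    errors = {}
    if not EMAIL_PATTERN.match(form.get("email", "").strip()):
        errors["email"] = "not a valid email format"
    if form.get("country") not in ALLOWED_COUNTRIES:
        errors["country"] = "choose a value from the list"
    return errors

print(validate_submission({"email": "jon@acme.com", "country": "US"}))
print(validate_submission({"email": "jon@acme", "country": "United States"}))
```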

Defined ownership: Someone needs to be responsible for data quality. This doesn't mean doing all the work personally. It means having authority to enforce standards and resolve disputes about what "correct" looks like.

Documented standards: Write down your formatting conventions, your merge rules, your source priority hierarchy. When someone new joins the team, they should be able to understand how data is maintained without an oral history lesson.
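A source priority hierarchy is easier to follow when it is written down in a form that can also be applied. The sketch below assumes a hypothetical ordering in which the CRM wins conflicts, and a merge rule that takes each field from the highest-priority source with a non-empty value.

```python
# Hypothetical priority order: earlier sources win when values conflict.
SOURCE_PRIORITY = ["crm", "ecommerce", "email_platform", "sales_sheet"]

def merge_by_priority(versions):
    """Merge {source: record} into one record, field by field, and note where each value came from."""
    merged, origin = {}, {}
    for source in SOURCE_PRIORITY:
        for field, value in versions.get(source, {}).items():
            if value and field not in merged:
                merged[field] = value
                origin[field] = source
    return merged, origin

versions = {
    "email_platform": {"email": "jon@acme.com", "name": "jon smith"},
    "crm": {"name": "Jon Smith", "phone": ""},
    "sales_sheet": {"phone": "+1 555-123-4567"},
}
merged, origin = merge_by_priority(versions)
print(merged)
print(origin)
```

The CRM supplies the name, but its empty phone field does not overwrite the number from the sales sheet. The `origin` mapping records which source each value came from.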

What This Looks Like With CleanSmart

This is exactly the workflow CleanSmart supports.

Upload your CSVs from any source. SmartMatch finds duplicates using semantic similarity that catches name variations and typos. AutoFormat standardizes phone numbers, emails, dates, and addresses automatically. SmartFill predicts missing values based on patterns in your existing data. LogicGuard flags anomalies for review.

Every change is previewed before it's applied. Every modification is logged in a complete audit trail. You can undo any operation without losing other work. When you're done, export the unified dataset or push it directly to connected platforms.

No code. No complex pipeline to maintain. Just clean data that everyone can trust.

How is a single source of truth different from a data warehouse?

A data warehouse is a technical solution: a centralized database where data from multiple sources is stored for analysis. A single source of truth is a principle: the idea that every data question has one authoritative answer. You can build a single source of truth using a data warehouse, but you can also achieve it with simpler approaches like maintaining one master spreadsheet that feeds all other tools. The principle matters more than the specific technology.

What if different teams need data in different formats?

The single source of truth is your canonical version, the one that's definitively correct. Teams can still receive data formatted for their specific tools. A CRM might need phone numbers in one format while a dialer needs another. The key is that both formats derive from the same underlying source. Changes to the source propagate to all downstream formats.

How often should I update my single source of truth?

Frequency depends on how fast your data changes. E-commerce businesses with daily transactions might need daily updates. B2B companies with longer sales cycles might update weekly or monthly. The right cadence is frequent enough that no one is making decisions on stale data, but not so frequent that updates become disruptive. Start with weekly and adjust based on what you learn.

William Flaiz is the founder of CleanSmart, an AI-powered data cleaning platform built for Marketing Ops, RevOps, and SalesOps teams at growing businesses. He's spent 20+ years in enterprise MarTech and digital transformation, including leadership roles that drove over $200M in operational savings. He holds MIT's Applied Generative AI certification and writes about the realities of AI-assisted product development, data quality, and MarTech that actually works. Connect with him on LinkedIn or at williamflaiz.com.