AI-Powered Data Cleaning: How Machine Learning Catches What Rules Miss

Here's a scenario I've watched play out at probably a dozen client engagements. The marketing team runs a deduplication tool against their CRM. The tool reports back: 47 duplicates found, 47 duplicates merged. Everyone's happy. The list is clean.

Except it isn't.

Three weeks later, sales is still complaining about chasing the same leads twice. Marketing notices their email open rates haven't budged. Someone pulls a sample of the "cleaned" data and finds Robert Chen at Acme Corp and Bob Chen at Acme Corp sitting two rows apart, both still active, both still getting the same nurture sequence. The traditional dedupe tool saw two different first names and treated them as two different people.

This is the gap between rule-based data cleaning and what people are now calling AI-powered data cleaning. It's not a marketing buzzword. There's a real technical difference, and that difference shows up in your numbers.

Let's talk about what's actually happening under the hood, where AI helps, where it doesn't, and how to evaluate tools that claim to use it.

The Limits of Rule-Based Cleaning

Traditional data cleaning tools work on rules you can describe in a sentence. Match records where the email address is identical. Flag any phone number that doesn't have 10 digits. Standardize state names to their two-letter abbreviation.

These rules work great for a specific kind of problem: data that's wrong in predictable, structured ways. If your problem is that some records have phone numbers formatted as (555) 123-4567 and others as 555.123.4567, a rule-based tool will fix that all day long.
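To make that concrete, here's a minimal sketch of what two of those one-sentence rules look like in code. The function names and the tiny state lookup table are my own placeholders for illustration, not something from a specific tool.

```python
import re

# Hypothetical lookup table; a real one would cover every state and territory.
STATE_ABBREVIATIONS = {"california": "CA", "new york": "NY", "texas": "TX"}


def normalize_phone(raw):
    """Return a US number as 555-123-4567, or None if it doesn't have 10 digits."""
    digits = re.sub(r"\D", "", raw)  # drop everything that isn't a digit
    if len(digits) != 10:
        return None  # the "flag it" case from the rule above
    return f"{digits[:3]}-{digits[3:6]}-{digits[6:]}"


def normalize_state(raw):
    """Map a full state name to its two-letter code; leave unknown values alone."""
    cleaned = raw.strip()
    return STATE_ABBREVIATIONS.get(cleaned.lower(), cleaned)


print(normalize_phone("(555) 123-4567"))
print(normalize_phone("555.123.4567"))
print(normalize_state("California"))
```

Both phone formats come out identical, which is exactly the kind of predictable, structured fix rules are good at.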

The trouble starts when your data is wrong in human ways.

Consider these record pairs:

- Robert Chen at acme.com and Bob Chen at acme.com.

- IBM Corporation in your customer table and International Business Machines in your prospect table.

- Kristin Mueller and Kristen Mueller, both at the same company, both with phone numbers that differ only in their last digit because someone made a typo during data entry.

No rule-based system catches these reliably. You can write fuzzy matching rules with tolerance thresholds, but you end up in a frustrating spot. Set the threshold too loose and you start merging genuinely different people. Set it too tight and you miss the obvious cases your sales team will find embarrassing.
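You can see the threshold bind with nothing more than Python's standard library. This is a rough sketch using difflib's SequenceMatcher as a stand-in for whatever fuzzy matcher your tool uses. The 0.85 threshold and the Jon Smith / Jan Smith pair (assumed here to be two different people) are made up for illustration.

```python
from difflib import SequenceMatcher


def similarity(a, b):
    """Character-level similarity between 0 and 1, ignoring case."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


THRESHOLD = 0.85  # illustrative value, not a recommendation

pairs = [
    ("Robert Chen", "Bob Chen"),            # same person, different nickname
    ("Kristin Mueller", "Kristen Mueller"), # same person, one-letter typo
    ("Jon Smith", "Jan Smith"),             # assumed to be two different people
]

for left, right in pairs:
    score = similarity(left, right)
    verdict = "match" if score >= THRESHOLD else "no match"
    print(f"{left!r} vs {right!r}: {score:.2f} -> {verdict}")
```

On these three pairs, the nickname pair scores lowest and the two different people score above the threshold. Loosen the threshold enough to catch Robert and Bob and you merge Jon and Jan along the way.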

I've watched ops teams spend weeks tuning matching rules and still end up with a 30 to 40 percent miss rate on duplicates that any human reviewing the data would catch in five seconds.

What "AI-Powered" Actually Means

The term gets thrown around so much it's lost meaning. Let me cut through it.

When a data cleaning tool says it uses AI, what's usually happening is one of two things. The first is some flavor of machine learning model that's been trained on millions of examples of how data values relate to each other. The second is the use of language models or embedding models that can compare meaning, not just characters.

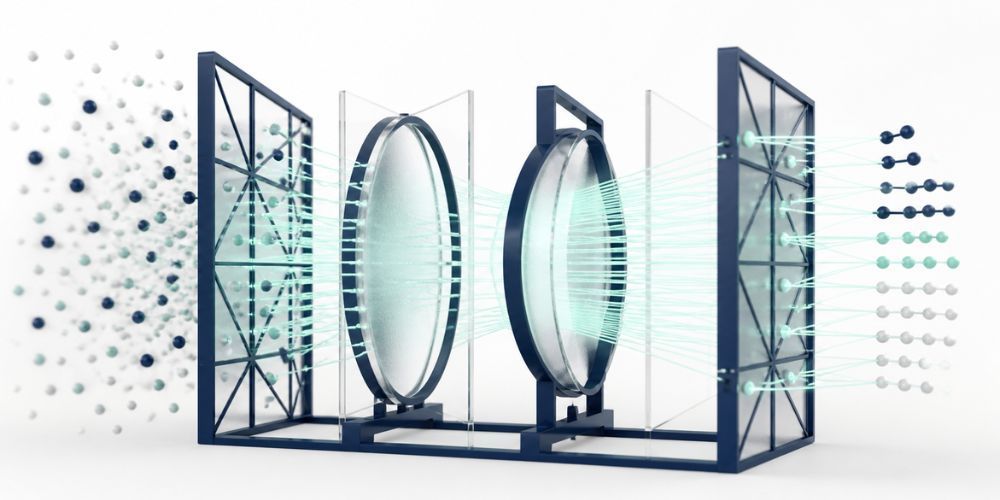

The most useful application for data cleaning is something called semantic similarity. Instead of comparing two strings letter by letter, the system converts each value into a mathematical representation that captures what it means. Then it compares those representations.

This is how the system knows that Robert and Bob can refer to the same person. The model has seen enough English-language data to understand that Bob is a common nickname for Robert. It's not following a rule that says "if name = Robert, also match Bob." It has recognized a pattern across billions of words.

The practical effect: AI-powered cleaning catches the duplicates and inconsistencies that traditional tools systematically miss. Not all of them. There's no perfect tool. But the gap is significant enough that once you've used semantic matching on a real customer database, going back to string-based dedupe feels like trying to clean a kitchen with a toothbrush.

Semantic Matching: Finding Duplicates That Look Different

Let's get concrete about how this works in practice.

A semantic matching engine takes each record in your dataset and converts the relevant fields into what's called an embedding. Think of an embedding as a list of numbers that represent the meaning of a value. Records with similar meanings get similar number patterns, even if the actual text is different.

When you run deduplication, the system compares these embeddings rather than the raw text. "Robert Chen, robert.chen@acme.com, VP Marketing" and "Bob Chen, bchen@acme.com, Marketing VP" produce embeddings that are mathematically close. The system flags them as a likely match.
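"Mathematically close" usually means something like cosine similarity between the two vectors. Here's a small sketch of that comparison. The four-number vectors are invented by hand purely for illustration (real embedding models produce hundreds of dimensions, and you'd get the vectors from the model, not type them in), and the third record is a hypothetical unrelated contact.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two vectors: near 1.0 means similar direction."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


# Made-up embeddings for illustration only; not output from any real model.
toy_embeddings = {
    "Robert Chen, VP Marketing": [0.82, 0.10, 0.55, 0.05],
    "Bob Chen, Marketing VP": [0.79, 0.14, 0.58, 0.09],
    "Dana Ortiz, Staff Accountant": [0.08, 0.91, 0.12, 0.40],
}

robert = toy_embeddings["Robert Chen, VP Marketing"]
bob = toy_embeddings["Bob Chen, Marketing VP"]
dana = toy_embeddings["Dana Ortiz, Staff Accountant"]

print(f"Robert vs Bob:  {cosine_similarity(robert, bob):.3f}")
print(f"Robert vs Dana: {cosine_similarity(robert, dana):.3f}")
```

The comparison itself is simple arithmetic. The hard part, and the part the model is doing for you, is producing vectors where records that mean the same thing land near each other.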

What this catches that string matching misses:

- Nickname variations. Robert and Bob, William and Bill and Will, Margaret and Maggie and Peggy, Alexander and Alex and Sasha. There are hundreds of these and no rule-based system has them all.

- Company name variations. IBM and International Business Machines. JPMorgan and JP Morgan Chase. Apple and Apple Inc.

- Typos and spelling variants that slip past a fuzzy threshold set tight enough to avoid false merges. Kristin and Kristen. Stevens and Stephens. Anderson and Andersen.

- Reordered or abbreviated information. "Smith, John" and "John Smith." "123 Main Street, Apt 4" and "123 Main St #4."

The limit of semantic matching is that it requires enough context to be confident. If your only field is a single first name, even AI can't tell if Robert is the same person as Bob. Give it first name, last name, email domain, and company, and accuracy goes way up.

Confidence Scoring: Knowing When to Trust the AI

This is the part of AI-powered cleaning that matters most and gets discussed least.

A good AI cleaning tool doesn't just say "these are duplicates." It says "I'm 94 percent confident these are duplicates" or "I'm 67 percent confident these are duplicates." That confidence score is the difference between a tool you can trust and a tool that's just a faster way to make mistakes.

High-confidence matches, let's say 90 percent and above, can usually be merged automatically. The system is essentially as sure as a human reviewer would be. Letting it run on autopilot saves time without introducing real risk.

Mid-confidence matches, somewhere between 70 and 90 percent depending on your data, should be flagged for human review. These are the cases where the system suspects a match but isn't sure enough to act unilaterally. A good tool surfaces these in a queue where you can approve or reject them quickly.

Low-confidence matches, below 70 percent, usually shouldn't even be shown unless you're doing exploratory analysis. They generate too much noise.
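The three tiers above boil down to a small routing function. This is a sketch using the example cutoffs from this section (90 and 70 percent) as defaults. The function and label names are placeholders, and the right cutoffs depend on your data.

```python
def route_match(confidence, auto_merge_at=0.90, review_at=0.70):
    """Decide what to do with a candidate duplicate pair based on its confidence."""
    if confidence >= auto_merge_at:
        return "auto_merge"    # as sure as a human reviewer would be
    if confidence >= review_at:
        return "human_review"  # suspected match, goes to the review queue
    return "ignore"            # too noisy to surface by default


candidates = [("pair-1", 0.94), ("pair-2", 0.67), ("pair-3", 0.81)]
for pair_id, confidence in candidates:
    print(pair_id, route_match(confidence))
```

Keeping the cutoffs as parameters matters more than the exact numbers: you want to be able to tighten them for a high-stakes list without touching anything else.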

The practical workflow this enables: you run cleaning on a list of 50,000 records, the AI handles 90 percent of the duplicates automatically, you spend 30 minutes reviewing the borderline cases, and the whole thing takes a couple of hours instead of weeks.

Full disclosure, this is how I built CleanSmart. After watching too many clients struggle with either fully automated tools that made bad decisions or fully manual processes that nobody had time for, I designed CleanSmart's SmartMatch around confidence scoring with human review for the cases that warrant it.

Anomaly Detection Beyond Simple Rules

Deduplication gets most of the attention in AI cleaning conversations, but anomaly detection is where machine learning earns its keep in a different way.

Rule-based anomaly detection works on bounds you define. Flag any age over 120. Flag any order total above $10,000. Flag any date in the future for a birthday field. Useful, but limited. You have to know in advance what "weird" looks like.
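In code, those three bounds look something like the sketch below. The record layout (a dict with age, order_total, and birthday keys) is an assumption for the example.

```python
from datetime import date


def rule_based_flags(record, today=None):
    """Return a list of rule violations for one record (empty if none)."""
    today = today or date.today()
    flags = []

    age = record.get("age")
    if age is not None and age > 120:
        flags.append("age over 120")

    total = record.get("order_total")
    if total is not None and total > 10_000:
        flags.append("order total above $10,000")

    birthday = record.get("birthday")
    if birthday is not None and birthday > today:
        flags.append("birthday in the future")

    return flags


record = {"age": 134, "order_total": 250.0, "birthday": date(2031, 1, 1)}
print(rule_based_flags(record, today=date(2025, 1, 1)))
```

Every check here is something a person had to think of ahead of time. Anything nobody thought of passes straight through.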

Machine learning approaches to anomaly detection work differently. Algorithms like Isolation Forest analyze the patterns in your data and identify records that don't fit those patterns, even when you haven't defined what the patterns should be.

A practical example: in a customer database, ML-based anomaly detection might flag a record where the listed company is a Fortune 500 enterprise but the email domain is a free Gmail address, the title is "Owner," and the phone number's area code doesn't match the listed state. No single field is wrong. The combination is statistically unusual enough to deserve a second look.
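If you're curious what Isolation Forest is doing, here's a stripped-down, pure-Python sketch of the core idea: records that can be separated from the rest with very few random splits are probably unusual. This is a simplification I wrote for illustration. It re-randomizes the splits for every record instead of building a forest once on subsamples, and in practice you'd use a tested library implementation such as scikit-learn's IsolationForest. The two columns (order total, item count) and all the values are made up.

```python
import math
import random


def expected_path(n):
    """Average path length c(n) used to normalize isolation depths."""
    if n <= 1:
        return 0.0
    if n == 2:
        return 1.0
    return 2.0 * (math.log(n - 1) + 0.5772156649) - 2.0 * (n - 1) / n


def isolation_depth(point, rows, rng, depth, max_depth):
    """Follow `point` through one random tree until it is isolated."""
    if len(rows) <= 1 or depth >= max_depth:
        return depth + expected_path(len(rows))
    # Only split on features that still vary among the remaining rows.
    varying = [f for f in range(len(point)) if len({r[f] for r in rows}) > 1]
    if not varying:
        return depth + expected_path(len(rows))
    feature = rng.choice(varying)
    values = [r[feature] for r in rows]
    split = rng.uniform(min(values), max(values))
    # Keep only the rows that fall on the same side of the split as `point`.
    same_side = [r for r in rows if (r[feature] < split) == (point[feature] < split)]
    return isolation_depth(point, same_side, rng, depth + 1, max_depth)


def anomaly_scores(rows, n_trees=200, seed=0):
    """Score each row between 0 and 1; higher means easier to isolate."""
    rng = random.Random(seed)
    max_depth = math.ceil(math.log2(len(rows)))
    norm = expected_path(len(rows))
    scores = []
    for point in rows:
        total = sum(isolation_depth(point, rows, rng, 0, max_depth) for _ in range(n_trees))
        scores.append(2 ** (-(total / n_trees) / norm))
    return scores


# (order_total, item_count): twelve ordinary orders plus one odd one at the end.
rows = [
    (52.0, 1), (75.5, 2), (61.0, 1), (120.0, 4), (98.0, 3), (88.0, 2),
    (143.0, 5), (67.0, 2), (110.0, 3), (79.0, 2), (134.0, 4), (91.0, 3),
    (4800.0, 1),
]
scores = anomaly_scores(rows)
most_unusual = rows[scores.index(max(scores))]
print("Most unusual record:", most_unusual)
```

Notice that nothing in this code says what a normal order looks like. The odd record stands out only because it's easy to separate from everything else.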

This is how you catch the things rule-writers didn't think of. Data entry errors that pass all the format checks. Records that were created when someone was testing a form. Imports from a legacy system that mixed real customers with sample data.

When You Still Need Human Review

AI-powered cleaning is genuinely better than rule-based for a lot of jobs. It's not magic.

There are cases where you need human judgment in the loop:

- When the cost of a false merge is high. If you're cleaning a B2B prospect list and accidentally merge two different decision-makers at a strategic account, the consequences are bigger than a marketing email going to the wrong person. Higher-stakes data deserves more review.

- When your data has unusual conventions. Industry-specific terminology, unique account naming systems, or company-internal codes won't be reflected in the training data of general-purpose models. The AI will guess based on general patterns, which may not match your specific reality.

- When confidence scores are mid-range. The whole point of confidence scoring is to flag the cases that need a human. Trust the system when it tells you it's not sure.

- When you're cleaning data you'll use for compliance reporting or executive metrics. The standard for accuracy is higher when the stakes are higher.

The right model is AI handling the volume so humans can focus on the judgment calls. Not AI replacing judgment entirely.

Choosing an AI Data Cleaning Tool

If you're evaluating tools that claim to use AI, here's what to actually look for.

Ask how the matching works under the hood. "AI-powered" with no further explanation usually means "we use some kind of fuzzy matching and called it AI for marketing." Look for vendors who can explain whether they're using embeddings, which model architecture they rely on, and how they handle confidence scoring.

Test it on your real data. A demo on a vendor's curated sample set tells you nothing. Get a trial, throw your messiest 1,000 records at it, and see what happens. Pay attention to what it catches that traditional tools missed and what false positives it generates.

Look for transparent confidence scoring. The tool should tell you why it thinks records are duplicates and how confident it is. Black-box "trust us" tools are how you end up with quietly corrupted data.

Check for an audit trail. Every change should be logged with the original value, the new value, the confidence score, and the reasoning. This matters for compliance and for your own sanity when you need to figure out what happened.
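As a rough picture of what one audit entry could hold, here's a sketch built from the four items above plus a record ID, field name, and timestamp. The class, field names, and sample values are my own assumptions for illustration, not a schema from any particular product.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    """One logged change: enough detail to explain it later and to undo it."""
    record_id: str
    field_name: str
    original_value: str
    new_value: str
    confidence: float
    reasoning: str
    changed_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def revert(entry):
    """Return the field update that would undo this change."""
    return {entry.field_name: entry.original_value}


entry = AuditEntry(
    record_id="contact-1042",  # hypothetical ID
    field_name="first_name",
    original_value="Bob",
    new_value="Robert",
    confidence=0.94,
    reasoning="Merged with a likely duplicate: same last name, company, and email domain",
)
print(json.dumps(asdict(entry), indent=2))
print(revert(entry))
```

Storing the original value is what makes undo possible. A log that only records the new value tells you what happened but can't help you fix it.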

Make sure there's a review workflow for changes the tool isn't highly confident about. If the tool just applies everything automatically with no human gate, walk away. Even 95 percent accuracy across 100,000 automated changes means 5,000 incorrect ones you'll never catch.
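The arithmetic is worth running against your own list size. A tiny sketch, assuming every change is applied automatically with nobody checking:

```python
def expected_bad_changes(total_changes, accuracy):
    """Changes you'd expect to be wrong if everything is auto-applied."""
    return round(total_changes * (1 - accuracy))


print(expected_bad_changes(100_000, 0.95))
```

Swap in your own volume and whatever accuracy a vendor quotes you, and the case for a human gate usually makes itself.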

The best AI data cleaning tools combine the speed and pattern recognition of machine learning with the judgment and oversight of the people who actually know your business. Anyone selling you full automation with no human review is selling you a faster way to make mistakes at scale.

How is AI data cleaning different from fuzzy matching?

Fuzzy matching compares strings character-by-character with some tolerance for variation. It catches typos and minor spelling differences but fails on things like nicknames, abbreviations, or completely different phrasings of the same thing. AI data cleaning uses semantic similarity, which compares the meaning of values rather than just their characters. This catches Robert and Bob, IBM and International Business Machines, and other variations that fuzzy matching systematically misses.

Can AI data cleaning work without training on my specific data?

Yes, and this is one of the practical advantages. Modern semantic matching uses pre-trained models that already understand language patterns from massive datasets. You don't need to train a custom model on your data to get started. The trade-off is that for very industry-specific terminology or unusual conventions, accuracy may be lower than for general business data. Most tools allow you to provide examples or feedback to improve performance over time.

Is AI data cleaning safe to run automatically?

It depends on how the tool handles confidence scoring. A well-designed AI cleaning tool will only apply high-confidence changes automatically and flag mid-confidence matches for human review. Tools that apply all changes regardless of confidence are risky, especially on important data. Look for tools that let you set confidence thresholds, provide an audit trail of every change, and offer easy ways to undo modifications.

William Flaiz is the founder of CleanSmart, an AI-powered data cleaning platform built for Marketing Ops, RevOps, and SalesOps teams at growing businesses. He's spent 20+ years in enterprise MarTech and digital transformation, including leadership roles that drove over $200M in operational savings. He holds MIT's Applied Generative AI certification and writes about the realities of AI-assisted product development, data quality, and MarTech that actually works. Connect with him on LinkedIn or at williamflaiz.com.