Real-Time vs Batch Data Cleaning: When to Use Each

Somewhere between "clean it as it arrives" and "clean it all on Sunday night," there's an approach that fits your team. The trick is figuring out which one before you build an entire pipeline around the wrong choice.

This is one of those decisions that seems straightforward until you're knee-deep in implementation. Real-time cleaning sounds better because faster is always better, right? Not necessarily. Batch cleaning sounds simpler because you can schedule it and forget it, right? Not exactly.

Both approaches have real trade-offs, and the right answer depends less on technology preferences and more on what your data actually needs.

The Timing Question

Data cleaning has a timing problem. Bad data causes more damage the longer it sits in your system. A duplicate record created Monday morning has compounded into duplicate emails, duplicate pipeline entries, and conflicting reports by Friday afternoon.

So clean it immediately, problem solved?

Not quite. Real-time cleaning requires your system to evaluate every record at the moment of entry. That means processing overhead on every form submission, every API call, every import. It means your users wait while validation runs. And it means your cleaning logic needs to be fast enough not to create bottlenecks.

Batch cleaning avoids all of that by running on a schedule: every night, every week, or before specific events like a campaign launch. Records enter the system as-is and get cleaned later. The trade-off is that bad data lives in your system until the next batch runs.

Neither approach is universally correct. Understanding when each one shines is what matters.

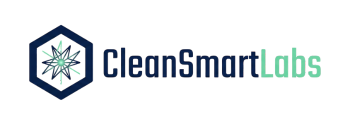

Real-Time: Validation at the Point of Entry

Real-time cleaning catches problems at the door. When someone submits a web form, imports a CSV, or creates a record through an API, real-time validation fires immediately.

What real-time handles well:

Format standardization. Phone numbers, email addresses, postal codes. These have known correct formats, and standardizing them on entry takes milliseconds. There's no reason to let "(555) 123-4567" sit in your database for a week before converting it to "+1-555-123-4567." Do it on the way in.

Obvious validation errors. An email without an "@" symbol. A date in 1899. A negative quantity on an order. These are binary checks: the data is either valid or it isn't. Catching them in real-time prevents the need to chase down corrections later.

Required field enforcement. If a record needs a company name to be useful, rejecting it at the point of entry is more effective than flagging it during a batch run when the person who created it has moved on to other things and forgotten the context.
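To make the three checks above concrete, here is a minimal sketch of an entry-time validator. The field names (email, company, phone, quantity), the REQUIRED_FIELDS tuple, and the assumption that phone numbers are 10-digit US numbers are all hypothetical choices for illustration, not a prescribed schema.

```python
import re
from typing import Dict, List, Optional, Tuple

# Hypothetical schema: which fields a record must have to be useful.
REQUIRED_FIELDS = ("email", "company")


def normalize_us_phone(raw: str) -> Optional[str]:
    """Return the number as +1-AAA-BBB-CCCC, or None if it is not a 10-digit US number."""
    digits = re.sub(r"\D", "", raw)  # strip everything except digits
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]  # drop a leading country code
    if len(digits) != 10:
        return None
    return f"+1-{digits[:3]}-{digits[3:6]}-{digits[6:]}"


def validate_on_entry(record: Dict) -> Tuple[Dict, List[str]]:
    """Run cheap, per-record checks. Returns (cleaned_record, errors)."""
    cleaned = dict(record)
    errors: List[str] = []

    # Required field enforcement.
    for field in REQUIRED_FIELDS:
        if not str(record.get(field) or "").strip():
            errors.append(f"missing required field: {field}")

    # Obvious validation errors: binary checks only.
    email = str(record.get("email") or "").strip()
    if email and "@" not in email:
        errors.append("email has no @ symbol")
    quantity = record.get("quantity")
    if isinstance(quantity, (int, float)) and quantity < 0:
        errors.append("quantity is negative")

    # Format standardization.
    phone = record.get("phone")
    if phone:
        normalized = normalize_us_phone(str(phone))
        if normalized is None:
            errors.append("phone is not a 10-digit US number")
        else:
            cleaned["phone"] = normalized

    return cleaned, errors
```

Every check here looks only at the record in hand, which is what keeps it fast enough to run on each submission. Returning the error list (rather than raising) lets a form show all problems at once while the person entering the data still has the context.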

What real-time struggles with:

Duplicate detection. Checking a new record against your entire database to determine if it's a duplicate takes time, especially with fuzzy or semantic matching. Running this in real-time for every form submission creates noticeable latency. Some real-time implementations do a quick exact-match check on entry and defer the fuzzy matching to a batch process, which is a reasonable hybrid.

Cross-record analysis. Anomaly detection, pattern analysis, and statistical outlier flagging all require looking at records in context. Is this invoice amount unusual? You can't answer that without comparing it to historical invoices. Recomputing that comparison from the full history on every new record is computationally expensive and doesn't belong in a real-time workflow.

Bulk imports. When someone uploads a 10,000-row CSV, running full real-time cleaning on every row as it enters creates a terrible user experience. Bulk operations are inherently batch workflows, even in a system that otherwise favors real-time processing.
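The hybrid mentioned under duplicate detection (a quick exact-match check on entry, with fuzzy matching deferred) might look something like the sketch below. The EntryDeduper class, its in-memory dictionary, and the choice of email as the match key are assumptions for illustration; a production system would more likely use a unique index in the database and a real job queue.

```python
from collections import deque
from typing import Deque, Dict, Optional


class EntryDeduper:
    """Exact-match duplicate check at entry; fuzzy matching is left for a later batch run."""

    def __init__(self) -> None:
        self.seen_emails: Dict[str, int] = {}   # normalized email -> first record id
        self.fuzzy_queue: Deque[int] = deque()  # record ids awaiting batch fuzzy matching

    def on_create(self, record_id: int, email: str) -> Optional[int]:
        """Return the id of an existing exact duplicate, or None if the record is new."""
        key = email.strip().lower()
        existing = self.seen_emails.get(key)
        if existing is not None:
            return existing  # exact duplicate: flag it immediately
        self.seen_emails[key] = record_id
        self.fuzzy_queue.append(record_id)  # defer the expensive comparison
        return None
```

The entry-time cost is a single dictionary lookup, so it stays constant as the database grows. Anything that needs "close enough" matching waits in the queue for the batch run.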

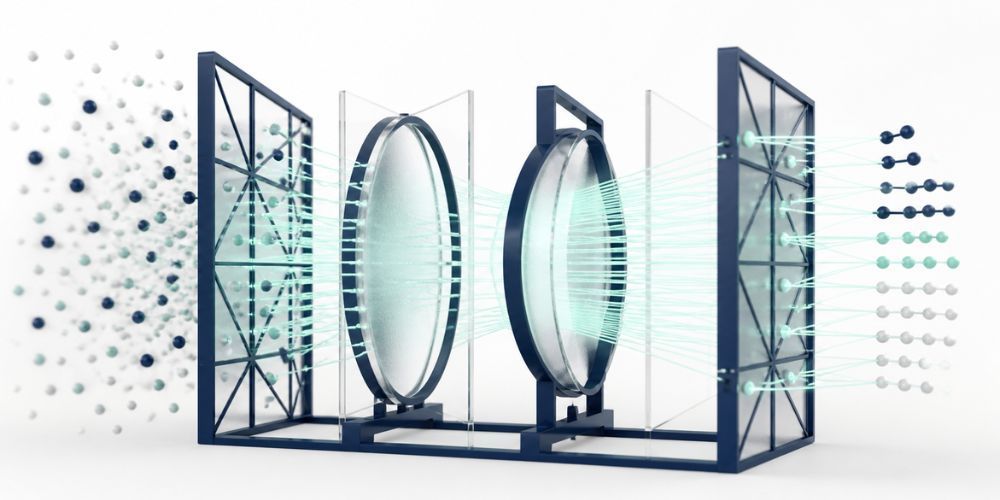

Batch: The Scheduled Cleanup

Batch cleaning processes records in groups on a schedule. Export the data (or query it in place), run your cleaning pipeline, and write the cleaned results back.

Where batch cleaning excels:

Deep deduplication. Semantic matching across your entire database is a batch operation. Comparing every record against every other record (or a smart subset) requires time and compute that doesn't fit into a real-time workflow. Batch dedup runs can be thorough in ways that real-time checks can't.

Statistical analysis and anomaly detection. Identifying outliers requires calculating baselines. Baselines require looking at the full dataset, or at least a significant sample. This is naturally a batch operation: gather the data, compute the statistics, flag the anomalies.

Historical cleanup. Your database accumulated years of messy data before you started caring about quality. Cleaning historical records is a batch job by definition. You're not waiting for new data to arrive; you're processing what already exists.

Pre-event preparation. Cleaning your email list before a campaign launch. Deduplicating your CRM before a migration. Standardizing vendor records before a financial audit. These are scheduled events with specific data quality requirements, and batch cleaning fits them naturally.
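As a rough illustration of batch deduplication against "a smart subset," the sketch below groups records by the first character of a normalized name and only compares records within the same group. It uses character-level similarity from Python's standard difflib module as a stand-in; that is not semantic matching, and the first-letter blocking key and the 0.85 threshold are arbitrary assumptions you would tune against your own data.

```python
from collections import defaultdict
from difflib import SequenceMatcher
from itertools import combinations
from typing import Dict, List, Tuple


def find_fuzzy_duplicates(
    records: List[Tuple[int, str]], threshold: float = 0.85
) -> List[Tuple[int, int]]:
    """records is a list of (id, name). Returns id pairs whose names look alike."""
    # Blocking: only compare names that share a first character,
    # so we avoid comparing every record against every other record.
    blocks: Dict[str, List[Tuple[int, str]]] = defaultdict(list)
    for rec_id, name in records:
        norm = " ".join(name.lower().split())  # lowercase, collapse whitespace
        if norm:
            blocks[norm[0]].append((rec_id, norm))

    pairs: List[Tuple[int, int]] = []
    for members in blocks.values():
        for (id_a, name_a), (id_b, name_b) in combinations(members, 2):
            if SequenceMatcher(None, name_a, name_b).ratio() >= threshold:
                pairs.append((id_a, id_b))
    return pairs
```

Even with blocking, the work inside each group grows with the square of the group size, which is why this kind of comparison sits more comfortably in a scheduled run than in a form submission.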

Where batch falls short:

Time-sensitive data. If a customer's shipping address is wrong, waiting for the nightly batch to fix it means today's order goes to the wrong place. Some corrections need to happen faster than your batch schedule allows.

User expectations. When someone manually corrects a record, they expect the correction to be reflected immediately. Running that correction through a batch pipeline that won't execute until midnight is confusing and erodes trust in the system.

Compounding errors. The longer bad data lives in your system, the more downstream processes consume it. Reports built between batch runs contain whatever data quality issues exist at that moment. If your batch runs weekly, you can produce up to six days of reports based on partially dirty data before the next run catches up.

The Hybrid Approach (What Most Teams Actually Need)

Most production environments end up with a hybrid: real-time validation for fast, definitive checks, and batch processing for complex analysis.

A practical hybrid looks like this:

On entry (real-time): Format standardization, required field validation, basic type checking, exact-match duplicate flagging. These are fast, unambiguous, and don't require cross-record analysis.

Continuous/near-real-time (minutes to hours): Quick duplicate checks against recently created records, basic anomaly flagging against cached statistics. Not truly instantaneous, but fast enough that problems don't compound.

Scheduled batch (daily/weekly): Full semantic deduplication across the entire database, statistical anomaly detection with recalculated baselines, historical data cleanup, and pre-event quality sweeps.

The separation matters because it aligns processing cost with processing value. Format standardization is cheap and immediately valuable. Do it in real-time. Semantic deduplication is expensive and benefits from full-dataset context. Do it in batch. Forcing expensive operations into real-time creates performance problems. Deferring cheap operations to batch creates data quality gaps.
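One way to picture the split between the batch tier and the near-real-time tier is anomaly flagging against cached statistics: the batch job recomputes the baseline, and the fast path only compares a single value against it. The sketch below assumes a simple z-score rule with a limit of 3.0 and hypothetical function names; real anomaly detection may use more robust statistics.

```python
import statistics
from typing import Dict, List


def compute_baseline(amounts: List[float]) -> Dict[str, float]:
    """Batch step: recompute mean and sample standard deviation from history.

    Needs at least two values, since a sample standard deviation is undefined for one.
    """
    return {
        "mean": statistics.fmean(amounts),
        "stdev": statistics.stdev(amounts),
    }


def is_anomalous(amount: float, baseline: Dict[str, float], z_limit: float = 3.0) -> bool:
    """Near-real-time step: compare one value against the cached baseline."""
    if baseline["stdev"] == 0:
        # All historical values were identical; anything different stands out.
        return amount != baseline["mean"]
    z_score = abs(amount - baseline["mean"]) / baseline["stdev"]
    return z_score > z_limit


# Usage: the nightly job refreshes the baseline, the entry path reuses it.
cached = compute_baseline([100, 110, 90, 105, 95])
print(is_anomalous(104, cached))  # within the normal range
print(is_anomalous(500, cached))  # far outside it
```

The expensive part (reading the full history) happens once per batch run, while the per-record check is a subtraction and a division. The cost is that the baseline is only as fresh as the last batch.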

Trade-offs: Speed vs. Thoroughness

The fundamental tension:

Real-time is faster but shallower. You catch problems immediately, but your cleaning logic needs to be simple enough to execute in milliseconds. Complex matching, statistical analysis, and cross-record validation don't fit the time budget.

Batch is slower but deeper. You have time for thorough analysis, but problems compound between runs. A duplicate created on Monday morning might not get caught until Sunday night's batch, by which point sales has already contacted the same prospect twice.

Resource considerations also differ significantly. Real-time cleaning consumes resources proportionally to your data ingestion rate. During high-volume periods (end of quarter, campaign launches, bulk imports), your cleaning system needs to scale with your data volume. Batch processing lets you control when compute resources are consumed, scheduling intensive operations during off-hours when system load is low.

Decision Framework: Choosing Your Approach

When deciding between real-time, batch, or hybrid:

Go real-time when your data is primarily user-entered (forms, CRM inputs), you need immediate feedback on data quality, the cleaning operations are fast and deterministic, and bad data has immediate downstream consequences.

Go batch when your data arrives in large volumes (imports, integrations), the cleaning requires cross-record analysis, your team works in campaign cycles with defined preparation periods, and you're doing historical cleanup of existing data.

Go hybrid when you have both user-entered and bulk data sources, you need both fast validation and deep analysis, your data volume varies significantly over time, and you want real-time catches for the obvious stuff and batch processing for the complex stuff.
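If it helps to see the framework as logic, here is a deliberately simplified sketch. The four boolean inputs and the recommend_mode function are hypothetical, they collapse the criteria above into two signals per side, and the fallback to batch when nothing applies is an assumption rather than a rule.

```python
def recommend_mode(
    user_entered: bool,
    needs_immediate_feedback: bool,
    bulk_sources: bool,
    needs_cross_record_analysis: bool,
) -> str:
    """Map a few yes/no answers to 'real-time', 'batch', or 'hybrid'."""
    wants_realtime = user_entered or needs_immediate_feedback
    wants_batch = bulk_sources or needs_cross_record_analysis

    if wants_realtime and wants_batch:
        return "hybrid"
    if wants_realtime:
        return "real-time"
    # Assumption: with no strong signal either way, default to the simpler option.
    return "batch"
```

A team with web forms and a nightly CRM import answers yes on both sides and lands on hybrid, which matches where most teams end up.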

For most teams, hybrid is the answer. The question is where to draw the line between what happens in real-time and what gets deferred to batch.

Scaling: From Batch to Hybrid to Real-Time

Many teams start with batch because it's simpler to implement. You can build a cleaning pipeline, run it manually, and iterate on the logic before investing in real-time infrastructure.

As your data volume grows and the cost of delayed cleaning becomes clearer, you start adding real-time components. Format standardization moves to input validation. Basic duplicate checking happens at record creation. Required fields get enforced in forms.

Eventually, the batch components become more sophisticated, not less. Your nightly run handles deduplication with semantic matching, anomaly detection with statistical baselines, and cross-source reconciliation. The real-time layer catches the simple stuff. The batch layer handles the complex stuff. They complement each other.

CleanSmart supports both modes. For batch workflows, upload your data and run the full cleaning pipeline: SmartMatch for deduplication, AutoFormat for standardization, SmartFill for missing values, and LogicGuard for anomaly detection. For teams building toward real-time, CleanSmart's API handles individual record validation and format standardization at the point of entry. Start with batch, add real-time as your needs grow.

Is real-time data cleaning always better than batch?

No. Real-time is faster but limited in the depth of analysis it can perform. Deep deduplication with semantic matching, statistical anomaly detection, and cross-record analysis all require batch processing because they depend on analyzing records in context. Most teams benefit from a hybrid approach: real-time for fast validation checks and format standardization, batch for thorough analysis.

How often should batch data cleaning run?

It depends on how quickly bad data causes problems in your workflows. Daily batch runs work well for teams whose reports and campaigns depend on recently cleaned data. Weekly runs are fine for teams with lower data volume or less time-sensitive operations. Before major events (campaigns, migrations, audits), run a batch clean regardless of schedule.

Can I switch from batch to real-time cleaning later?

Yes, and this is the recommended path for most teams. Start with batch to understand your data quality patterns and tune your cleaning rules. Then progressively move simpler, faster operations (format standardization, required field checks) to real-time while keeping complex operations (deduplication, anomaly detection) in batch. This incremental approach avoids the risk of building real-time infrastructure for cleaning rules that haven't been tested yet.

William Flaiz is the founder of CleanSmart, an AI-powered data cleaning platform built for Marketing Ops, RevOps, and SalesOps teams at growing businesses. He's spent 20+ years in enterprise MarTech and digital transformation, including leadership roles that drove over $200M in operational savings. He holds MIT's Applied Generative AI certification and writes about the realities of AI-assisted product development, data quality, and MarTech that actually works. Connect with him on LinkedIn or at williamflaiz.com.